What is M3-Agent?

Entity-Centric Memory for AI Systems

TL;DR

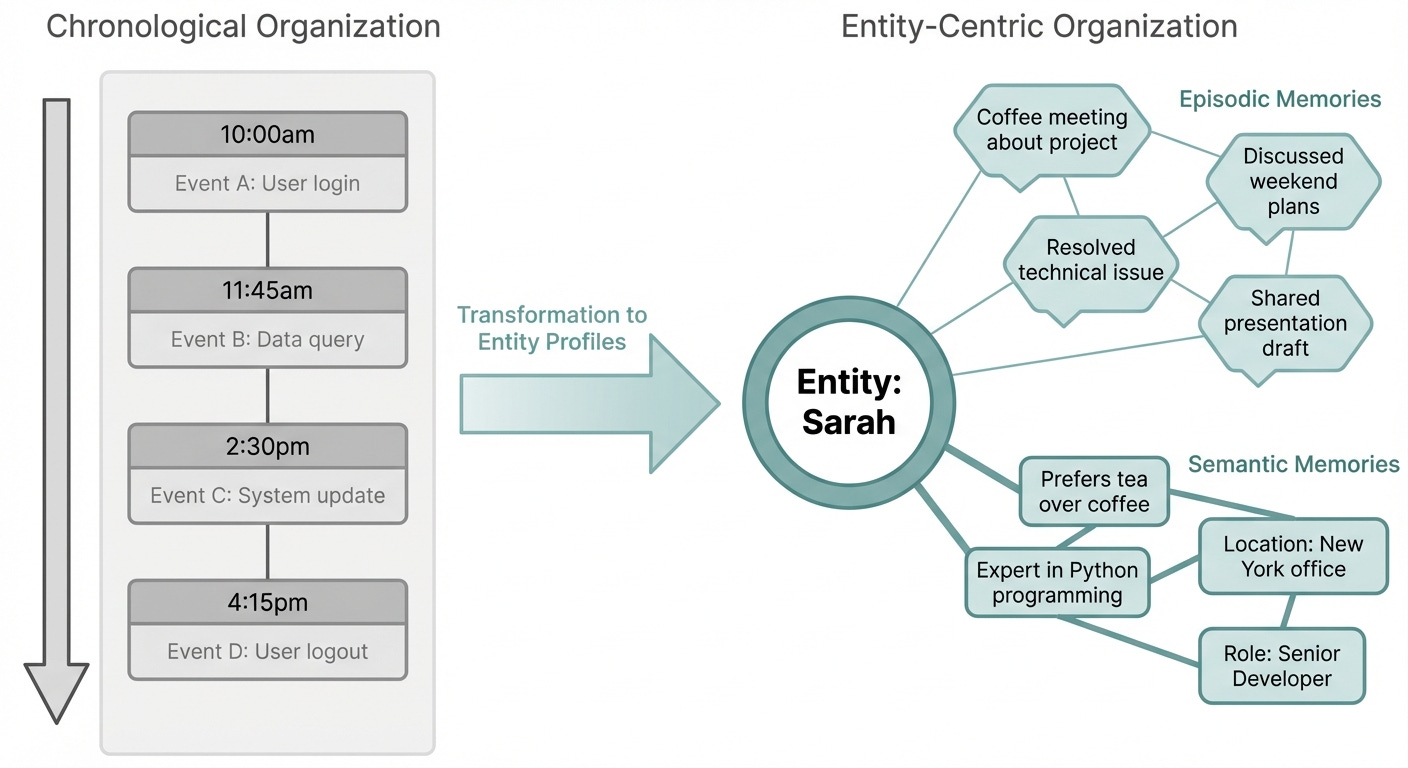

M3-Agent processes visual and auditory streams in real time and organizes memories around entities (people, objects, places) rather than timestamps. That lets a system ask “what do I know about Sarah?” instead of “what happened at 2:15pm?”

The system builds episodic memories (dated moments linked to entities) and semantic memories (structured knowledge about who they are and what they care about) directly from sensory streams, not text transcripts.

Entity-centric organization pays off at scale. With hundreds of entities to track, systems no longer reconstruct knowledge by stitching together scattered timestamps. They maintain active, continuously updated profiles.

How do you actually remember things? You don’t store memories by timestamp. You organize them around people, objects, and places. You remember Sarah: her priorities, what she’s mentioned before, her role in your team, what she cares about. You organize memories around her as an entity, not around the moments when she appeared on your calendar.

M3-Agent inverts how AI systems typically organize memory. Most approaches work chronologically: Event A at timestamp 1, Event B at timestamp 2. When you want to know what you learned about Sarah, you search the timeline. When you want to know about a specific object or place, you dig through minutes or hours of history.

M3-Agent makes entities the organizing principle. It processes visual and auditory streams in real time, extracts information about the entities that appear (who, what, where), and builds structured knowledge around them. This simple shift in organization changes what becomes easy to ask and what becomes hard.

The framework comes from recent research titled “Seeing, Listening, Remembering, and Reasoning: A Multimodal Agent with Long-Term Memory,” and it proposes a different way for AI systems to build and retrieve understanding.

How most agents fail at memory

Think about how you actually remember someone. You don’t recall “at 2:15pm on Tuesday, a person entered the room.” You remember John: what he cares about, what he’s mentioned before, his role in your team, what he usually orders for lunch. You organize memories around him, not around timestamps.

Most memory systems get this backwards. They organize chronologically. Event A happened at timestamp 1. Event B happened at timestamp 2. If you want to know what you learned about John, you have to dig through the timeline. You have to search across multiple timestamps, multiple conversations, multiple contexts.

This works fine for document retrieval or conversation history. It breaks down fast when you’re trying to understand a complex environment over weeks or months. A robot learning a factory floor doesn’t care when it first saw a particular conveyor belt. It cares about the belt itself: what it does, when it breaks, how to interact with it. A meeting assistant doesn’t need to remember “at 3pm, someone said X.” It needs to remember “Sarah always objects to aggressive timelines” or “Tom handles all logistics questions.”

M3-Agent inverts this. It organizes memory around entities.

What entity-centric memory actually looks like

An entity in M3-Agent’s framework can be a person, an object, a location, or any concept that persists in the world. The system builds two types of memories for each entity:

Episodic memory captures time-ordered experiences. For Sarah, it might log: “Tuesday 2:30pm, raised concerns about resource allocation. Thursday 10am, mentioned she’s overseeing Q2 planning.” These are specific, dated moments linked to her.

Semantic memory abstracts the general knowledge. From those episodes, the system learns: “Sarah prioritizes resource constraints. She owns Q2 planning. She tends to raise concerns early in projects.” This is structured knowledge about who she is, what she cares about, what she controls.
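To make the two memory types concrete, here is a minimal Python sketch of a per-entity record. It is an illustration under stated assumptions, not the paper's data model: the class names `Episode` and `EntityMemory`, the methods `observe` and `learn`, and the calendar dates are all hypothetical.

```python
from dataclasses import dataclass, field
from datetime import datetime


@dataclass
class Episode:
    """One dated observation linked to an entity."""
    when: datetime
    note: str


@dataclass
class EntityMemory:
    """Hypothetical per-entity record: dated episodes plus abstracted facts."""
    name: str
    episodic: list[Episode] = field(default_factory=list)
    semantic: set[str] = field(default_factory=set)

    def observe(self, when: datetime, note: str) -> None:
        # Episodic memory: append the moment and keep episodes in time order.
        self.episodic.append(Episode(when, note))
        self.episodic.sort(key=lambda e: e.when)

    def learn(self, fact: str) -> None:
        # Semantic memory: general knowledge, not tied to a single moment.
        self.semantic.add(fact)


# Dates are made up for illustration (a Tuesday and a Thursday).
sarah = EntityMemory("Sarah")
sarah.observe(datetime(2025, 1, 9, 10, 0), "mentioned she's overseeing Q2 planning")
sarah.observe(datetime(2025, 1, 7, 14, 30), "raised concerns about resource allocation")
sarah.learn("owns Q2 planning")
sarah.learn("prioritizes resource constraints")
```

In a real system the `learn` step would be driven by a model that abstracts over episodes; here it is called by hand to keep the sketch small.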

The key difference from text-based systems: M3-Agent maintains these memories by processing visual and auditory streams directly. It’s not converting video to text transcripts and then storing transcripts. It’s analyzing the streams themselves, extracting what matters about each entity, and building memories that reflect what it actually observed.

This sounds subtle. But the implications are significant.

A text-based system might miss that Sarah spoke with particular emphasis or body language that signals frustration. A speech-to-text pipeline might mishear a technical term. A video-to-text converter might describe “someone in a blue shirt” instead of recognizing the same person from yesterday’s meeting. M3-Agent doesn’t lose these details in translation. It processes the real sensory data and grounds its memories in what actually happened.

The architecture behind real-time multimodal understanding

M3-Agent’s system has to do several things simultaneously. It ingests visual and auditory streams in real time. It recognizes entities in those streams (who is this person, what is this object). It links current observations to past memories about those entities. And it updates both episodic and semantic memories as new information arrives.

The real-time requirement changes everything. You can’t batch process. You can’t wait for a full conversation transcript. You’re making sense of the world as it unfolds.

The framework handles this by maintaining active entity profiles. As it observes, it tags what it’s learning against specific entities. Visual input: “That’s John entering the conference room.” Audio input: “John is saying we need to cut budget by 20%.” Memory update: episodic entry for John at this timestamp, semantic update to John’s profile (he cares about budget, he sees cost cutting as necessary).
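The observe, tag, update flow described above can be sketched in a few lines of Python. This is a toy under loud assumptions: entity recognition is assumed to have already happened upstream (the `entity` field is given), and the keyword table `TRAIT_RULES` is a hypothetical stand-in for whatever learned abstraction step a real system would use. None of these names come from the paper.

```python
from collections import defaultdict
from dataclasses import dataclass, field


@dataclass
class Observation:
    timestamp: float  # seconds since the stream started
    modality: str     # "visual" or "audio"
    entity: str       # assumed output of an upstream recognizer
    content: str


@dataclass
class Profile:
    episodes: list = field(default_factory=list)
    traits: set = field(default_factory=set)


# Hypothetical stand-in for a learned abstraction step.
TRAIT_RULES = {"budget": "cares about budget"}

profiles = defaultdict(Profile)


def ingest(obs: Observation) -> None:
    """Tag one observation against its entity and update both memories."""
    profile = profiles[obs.entity]
    # Episodic entry: what was observed, when, and through which modality.
    profile.episodes.append((obs.timestamp, obs.modality, obs.content))
    # Semantic update: derive a general trait from the observation.
    for keyword, trait in TRAIT_RULES.items():
        if keyword in obs.content.lower():
            profile.traits.add(trait)


stream = [
    Observation(0.0, "visual", "John", "enters the conference room"),
    Observation(4.2, "audio", "John", "We need to cut budget by 20%"),
]
for obs in stream:
    ingest(obs)  # processed one at a time, as observations arrive
```

Each observation is handled as it arrives rather than in a batch, which mirrors the real-time constraint the section describes.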

Over days and weeks, these profiles become rich. The system doesn’t just know John’s name. It knows his priorities, his concerns, his communication style, what roles he takes in meetings, which other entities he interacts with frequently, how his views evolve over time.

Why entity organization matters

The organizing principle of a memory system shapes what becomes easy to ask and what becomes hard. Chronological organization makes sense for “what happened when.” Entity organization makes sense for “what do I know about this person/object/place.”

This distinction gets clearer at scale. If you’re managing hundreds or thousands of entities (meeting participants, warehouse objects, security camera identities), chronological search breaks down fast. You end up reconstructing entity knowledge by stitching together scattered timestamps. You’re not querying what you know about Sarah. You’re searching for “all mentions of Sarah” across time and context, then synthesizing.

Entity-centric storage means this information is already structured. You maintain an active profile for Sarah. Updates to her profile happen continuously as new observations arrive. When you query, you’re not searching. You’re retrieving structured knowledge that’s already built.
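The difference between searching a timeline and retrieving a profile shows up even in a toy Python example. The event log and function names below are hypothetical and exist only to contrast the two access patterns.

```python
from collections import defaultdict

# Chronological log: (timestamp, entity, note)
timeline = [
    (1, "Sarah", "raised concerns about resource allocation"),
    (2, "Tom", "answered a logistics question"),
    (3, "Sarah", "mentioned she's overseeing Q2 planning"),
]


def about_chronological(entity):
    """Scan the whole log at query time; cost grows with total events."""
    return [note for _, who, note in timeline if who == entity]


# Entity-centric index, built incrementally as events arrive.
by_entity = defaultdict(list)
for _, who, note in timeline:
    by_entity[who].append(note)


def about_entity(entity):
    """Direct lookup; cost does not depend on events about other entities."""
    return by_entity.get(entity, [])
```

Both functions return the same notes for Sarah. The trade-off is that the entity index does its work at write time, so reads stay cheap as the log grows.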

The architectural difference is subtle. The practical difference is significant.

Real applications starting now

This isn’t theoretical. Entity-centric multimodal memory enables concrete systems today.

Meeting intelligence. A system that joins your meetings, watches participants, and builds entity profiles for each person. Not just “Sarah spoke at 2:30pm.” Rather: Sarah’s communication style, what she consistently cares about, what roles she takes in decisions, which other participants she defers to, how her positions evolve across meetings. A query like “which team members have worked together on budget topics” is immediate. You’re not searching timestamps. You’re querying built entity profiles with relationship tags.

Robotics and embodied AI. A robot learning a warehouse. It builds entity profiles for pallets: which are fragile (learned through observation and failure), which contain high-value items, which ones always get routed to the same workstation. It profiles equipment: which conveyor sections jam regularly, which tools need lubrication, which machinery is approaching failure. It doesn’t just log events. It maintains growing knowledge about specific objects, updated continuously as the robot interacts with them.

Supervised environments. A system managing a secure facility or controlled site. It builds entity profiles for people, vehicles, equipment. It knows who normally works in which areas, what equipment is typically on the loading dock, which vehicles make regular deliveries. Anomalies become obvious because the system knows what normal looks like for each entity. A stranger in a secure area isn’t just a detected person. It’s a deviation from established entity behavior.

Personal AI assistants. An assistant that actually knows your world. It builds profiles for people you interact with regularly: their work style, what matters to them, their preferences. It knows your physical spaces: your office layout, your usual routes, where you typically sit in meetings. It knows your objects: your laptop, your preferred meeting tools, your coffee mug. Memory isn’t something you tell it to write down. It’s something that builds through continuous observation.
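As one illustration of how an application could use such profiles, the supervised-environment idea of "knowing what normal looks like for each entity" can be sketched as a per-entity count of where the entity has been seen. This Python sketch is an assumption about one simple way to do it; the entity and area names, the `min_seen` threshold, and both functions are hypothetical.

```python
from collections import Counter, defaultdict

# entity -> Counter of areas where that entity has been observed
sightings = defaultdict(Counter)


def record(entity, area):
    """Update the entity's profile with one more sighting."""
    sightings[entity][area] += 1


def is_anomalous(entity, area, min_seen=1):
    """Flag unknown entities, or known entities in an area they are rarely seen in."""
    if entity not in sightings:
        return True
    return sightings[entity][area] < min_seen


for _ in range(5):
    record("forklift_7", "loading dock")
record("forklift_7", "warehouse floor")
```

With these sightings, `is_anomalous("forklift_7", "loading dock")` is false, while the same forklift in an area it has never visited, or an entity with no profile at all, is flagged.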

The engineering challenge ahead

M3-Agent’s framework is elegant in concept. In practice, it requires solving hard problems.

Real-time processing of video and audio streams is computationally expensive. You need efficient encoding. You need fast entity recognition and linking. You need to update memories without lag. Systems that process everything locally have to fit on available hardware. Systems that use cloud processing add latency and privacy concerns.

Entity recognition at scale is non-trivial. As the system observes more and more entities, maintaining distinct profiles without collapse or confusion becomes harder. How do you know if two observations refer to the same entity or different ones? Matching works well in controlled environments. It gets harder in open worlds.
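A common way to frame the "same entity or a new one?" question is nearest-neighbor matching over feature embeddings with a similarity threshold. The Python sketch below shows that general technique, not M3-Agent's actual method. The three-dimensional vectors, the 0.9 threshold, and the function names are illustrative assumptions; real face or voice embeddings have hundreds of dimensions.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def resolve(embedding, known, threshold=0.9):
    """Return the best-matching known entity id, or None if nothing is close enough."""
    best_id, best_score = None, threshold
    for entity_id, reference in known.items():
        score = cosine(embedding, reference)
        if score >= best_score:
            best_id, best_score = entity_id, score
    return best_id


# Toy reference embeddings for two already-known people.
known = {"person_1": [1.0, 0.0, 0.0], "person_2": [0.0, 1.0, 0.0]}

same = resolve([0.95, 0.05, 0.0], known)  # close to person_1
new = resolve([0.0, 0.0, 1.0], known)     # unlike anyone known
```

A `None` result would trigger creating a new profile. The threshold is where the open-world difficulty lives: set it too low and distinct entities collapse into one profile, too high and one person fragments into many.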

Memory scaling is a real constraint. Episodic memories grow without bound if you capture everything. You need intelligent summarization and archival. You need to remember what matters and let less relevant details fade. Humans do this naturally. Systems have to learn it.
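One simple form of "letting details fade" is to keep recent episodes verbatim and fold older ones into a compact summary. The Python sketch below assumes an age cutoff and a per-topic count as the summary. Both are deliberately crude placeholders for the learned summarization a production system would need, and the function name and data are hypothetical.

```python
from collections import Counter


def consolidate(episodes, now, max_age):
    """Split episodes into recent ones kept verbatim and a summary of older ones.

    episodes: list of (timestamp, topic) tuples.
    Returns (recent_episodes, topic_counts_for_archived_episodes).
    """
    recent = [(t, topic) for t, topic in episodes if now - t <= max_age]
    archived = Counter(topic for t, topic in episodes if now - t > max_age)
    return recent, archived


episodes = [(1, "budget"), (2, "budget"), (3, "hiring"), (9, "budget"), (10, "timeline")]
recent, summary = consolidate(episodes, now=10, max_age=3)
```

Here the two newest episodes survive in full, while the three older ones shrink to counts per topic. Storage for old material then grows with the number of distinct topics rather than the number of events.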

What this tells us about the future of AI agents

M3-Agent represents a step toward agents that actually inhabit the world rather than just process text about it. It’s not the final form, but it’s pointing in the right direction.

The agent landscape has been dominated by large language models that work with text. They’re powerful but fundamentally disembodied. They don’t see. They don’t hear. They don’t have persistent interaction with anything real.

Systems like M3-Agent suggest the next generation will be different. Multimodal from the ground up. Continuous learning from sensory streams. Memory organized around understanding rather than chronology. Genuine long-term relationships with people, objects, and environments.

This doesn’t mean replacing language models. It means putting them in a richer context. Language reasoning is still valuable. But it’s one capability among others. The agent also perceives. It remembers. It updates its understanding based on observation.

We’re not quite there yet. Current systems have limitations. But the direction is clear. The AI agents that matter in the next few years won’t be the ones that answer questions best. They’ll be the ones that understand your world best.