What is Agentic Reasoning?

A new weekend deep read for those tracking the future of autonomous AI systems.

For years, interacting with AI felt like consulting a brilliant but static reference library. You provided a prompt, “Write a Python function to parse JSON,” and received exactly what you asked for: functional, precise, and confined to the boundaries of your request. Traditional large language models excelled at these isolated tasks, generating code, summarizing text, or translating languages with remarkable fluency. Yet they remained fundamentally reactive, waiting passively for the next instruction like a calculator awaiting the next equation vldb.org.

Agentic AI represents a fundamental departure from this paradigm. Instead of simply responding to “Write a Python function,” imagine asking, “Build me a research proposal,” and watching as the system springs into action—identifying relevant literature, outlining methodology, drafting sections, and even recognizing gaps in its own knowledge to seek clarification or additional data arxiv.org. This shift from reactive completion to proactive problem-solving marks the transition from passive tools to what researchers call “active decision-makers” capable of exercising agency to anticipate user needs and solve them autonomously across longer time horizons arxiv.org, arxiv.org.

To grasp this evolution, consider the junior operator metaphor. A traditional LLM is like a sophisticated calculator: powerful, accurate, and entirely dependent on you to push the right buttons. It executes brilliantly but lacks initiative. An agentic AI, by contrast, functions like a capable junior operator who listens to your high-level goals (“Prepare our quarterly report”) and then independently breaks down the task, searches for data, creates visualizations, drafts analyses, and returns with a complete proposal, flagging uncertainties and suggesting next steps without constant micromanagement. Rather than awaiting instruction, these systems proactively identify bottlenecks, plan multi-step workflows, and adapt their strategies based on environmental feedback arxiv.org, arxiv.org.

This transformation isn’t happening all at once. We’re witnessing a three-stage evolution that mirrors how human expertise develops. First, basic agents emerged—systems that could use tools and execute single, defined tasks with increasing competence, essentially moving beyond simple retrieval-augmented generation to interact with external environments vldb.org. Next came adaptive systems, capable of learning from feedback, refining their approaches, and adjusting to new contexts through sophisticated adaptation mechanisms that span both how agents improve themselves and how they utilize external tools arxiv.org. Finally, we’re now entering the era of collaborative ecosystems, where multiple specialized agents orchestrate complex workflows alongside human scientists, bridging natural language, computer code, and physical experimentation in multi-agent systems that solve problems no single model could tackle alone arxiv.org.

As we stand at this inflection point, understanding agentic AI requires more than appreciating individual model capabilities—it demands a systems-level perspective on how these agents interact with environments, tools, and each other to produce emergent intelligence greater than the sum of their parts arxiv.org. The dawn of active intelligence isn’t just about smarter algorithms; it’s about creating autonomous systems that can truly operate in the wild, anticipate needs, and collaborate in ways that fundamentally reshape how we approach complex knowledge work.

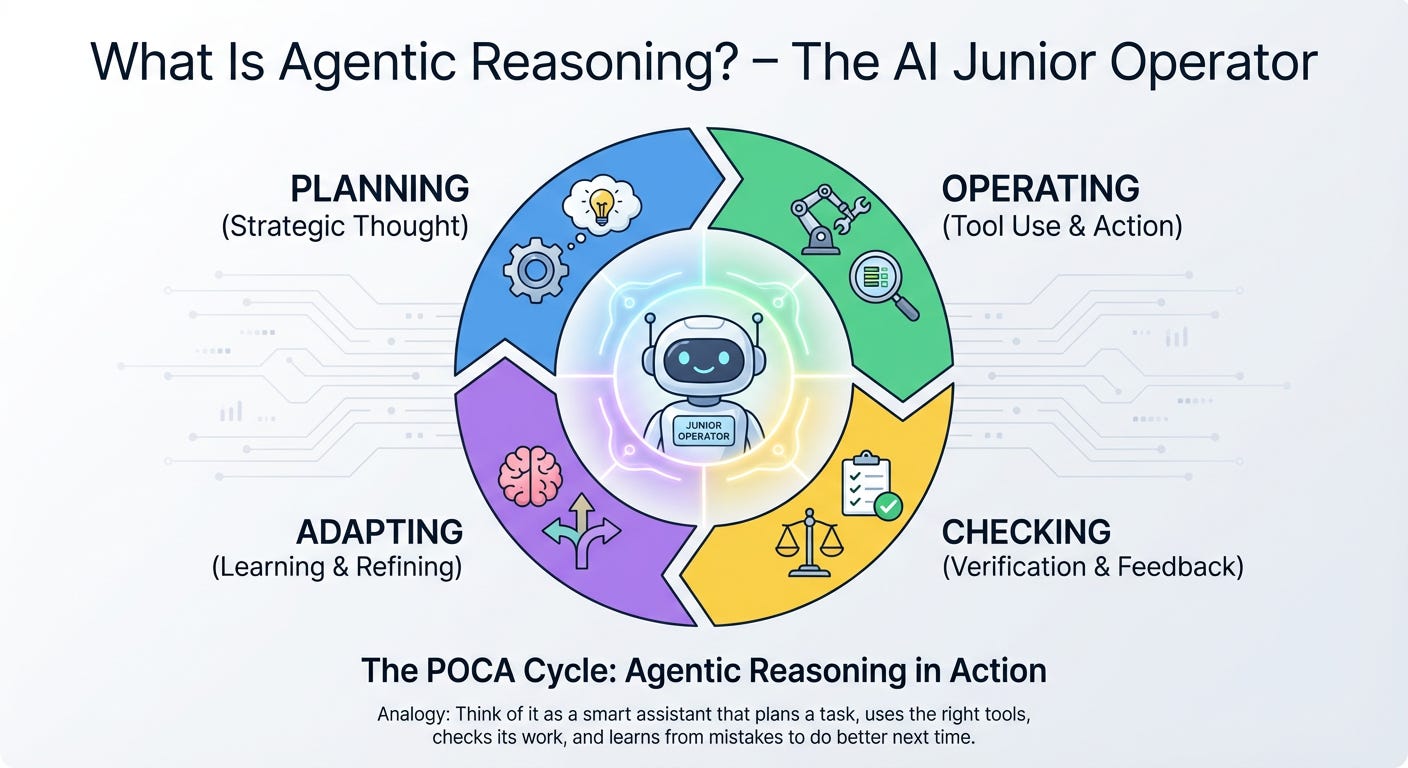

What Is Agentic Reasoning? – The AI Junior Operator

Imagine walking into a control room and finding not a veteran master technician who simply recites manuals from memory, but a junior operator—someone who cannot possibly know everything, yet knows exactly how to find out, fix what breaks, and keep the machinery running until the job is done. This is the essence of agentic reasoning: the capacity of an artificial system to sustain purposive activity across time, not by retrieving static facts, but by recursively generating, evaluating, and revising its own goals while interacting with the world frontiersin.org.

Formally, agentic reasoning marks a departure from traditional AI architectures that optimize externally specified objectives. Instead, it constitutes what researchers call synthetic purposiveness—an engineered capacity for “goal-directed reasoning and purposive orchestration” in which the system maintains recursive loops of perception, evaluation, goal-updating, and action frontiersin.org. Rather than functioning as a passive encyclopedia, the agent operates as a tool-use decision-maker, treating internal reasoning and external actions as equivalent epistemic instruments to efficiently acquire knowledge and complete tasks arxiv.org.

At the heart of this capability lies the POCA cycle—a continuous cognitive loop that transforms large language models from static text generators into dynamic problem-solvers:

P – Planning: Before touching a single lever, the junior operator maps the territory. Planning involves decomposing a high-level objective into sub-goals and anticipating the knowledge gaps that stand between the current state and the desired outcome. This is the phase of synthetic teleology, where the system generates its own “why” and “what next” frontiersin.org. Unlike simple prompt-response chains, agentic planning incorporates long-horizon reasoning, allowing the system to strategize across multiple temporal scales arxiv.org.

O – Operating (Tool Use): When the operator encounters a valve they cannot turn alone, they grab the right wrench. In agentic systems, operating means autonomously deciding when, how, and which external tools to invoke—whether searching a database, executing code, or querying a sensor. Frameworks like ARTIST demonstrate that when models learn tool integration through reinforcement learning, they develop robust strategies for environment interaction without step-level human supervision, leading to deeper reasoning and more effective tool use microsoft.com. The agent treats tool use as an epistemic decision: “Do I know this internally, or must I act to find out?” arxiv.org.

C – Checking (Verification): A good junior operator double-reads the gauges. The checking phase introduces metacognitive awareness—the system’s ability to verify intermediate results, detect inconsistencies, and assess whether its current trajectory actually leads toward the goal. This verification step is crucial because agentic AI operates in open-ended environments where self-deception and hallucination remain persistent risks arxiv.org. By maintaining teleological coherence—alignment between actions and stated objectives—the system prevents drift and ensures that each operation genuinely serves the broader mission frontiersin.org.

A – Adapting (Correction): When the pressure reading spikes unexpectedly, the operator adjusts the plan, not just the dial. Adapting involves the recursive revision of goals and strategies based on environmental feedback. This is where agentic reasoning diverges most sharply from deterministic algorithms: the system engages in adaptive recovery and reflective efficiency, rewriting its own objectives when the context demands it frontiersin.org. Through outcome-based reinforcement learning, the model learns not just to execute correctly, but to recognize when the execution itself requires structural change microsoft.com.

The significance of this transition cannot be overstated. Traditional LLMs, for all their encyclopedic knowledge, remain fundamentally constrained by “static internal knowledge and text-only reasoning”—glorified retrieval systems that answer questions but do not complete missions microsoft.com. Agentic reasoning represents the shift from knowledge retrieval to goal completion: from answering “What is the capital of France?” to orchestrating “Plan my multi-city European business trip within budget, accounting for real-time flight delays, and reschedule my meetings accordingly.”

This paradigm shift reclaims agency as a “first-class construct in artificial intelligence,” moving us beyond algorithmic optimization toward systems capable of genuine goal-directed reasoning frontiersin.org. The junior operator does not need to have all the answers stored in memory; they need only the reasoning infrastructure to find them, verify them, and adapt when the machinery of reality refuses to cooperate.

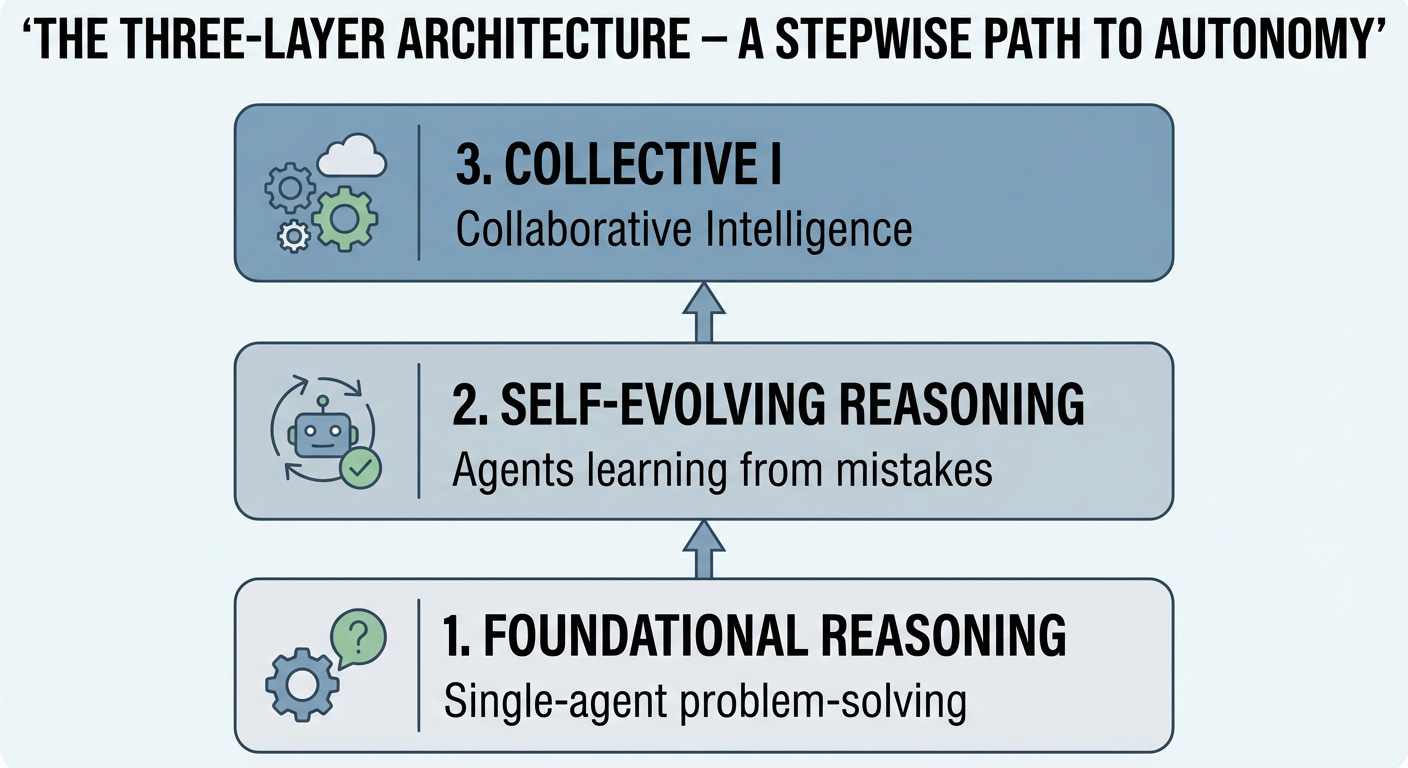

The Three-Layer Architecture – A Stepwise Path to Autonomy

Think of building an AI agent like growing a startup. You don’t begin with a fully staffed company; you start with a single hire, help them level up through trial and error, and eventually assemble a team where the whole becomes greater than the sum of its parts. The research taxonomy maps neatly onto this journey, breaking autonomy into three distinct stages of maturity.

1. Foundational Reasoning: The Solo Intern

At the base layer sits the solo intern—a single agent equipped with basic reasoning capabilities and access to tools, but largely dependent on explicit instructions. Like an entry-level employee on their first day, these systems handle discrete, well-defined tasks: looking up data, drafting emails, or running calculations. They follow scripts, use available resources, and solve problems within narrow boundaries, but they don’t yet learn from their mistakes or collaborate with peers. This corresponds to the reactive and context-aware stages of agentic behavior vellum.ai, where the system processes information and acts upon the environment to meet immediate objectives emergentmind.com, yet lacks the adaptivity to improve without human intervention.

2. Self-Evolving Reasoning: The Self-Taught Expert

Once the intern masters the basics, they enter the self-taught expert phase. Here, the agent develops the ability to learn from outcomes and refine its own decision-making processes—what researchers call metacognitive awareness emergentmind.com. Like an employee who studies their failures and iterates on their approach without waiting for a manager’s feedback, these agents engage in self-improving loops. Recent frameworks such as Agent0 demonstrate this dynamic through “curriculum agents” that propose increasingly difficult challenges and “executor agents” that adapt to solve them, creating a self-reinforcing cycle of capability growth arxiv.org. This layer represents the transition from goal-oriented behavior to genuine self-improvement vellum.ai, where the system begins to shape its own training data and reasoning strategies through tool-integrated reflection.

3. Collective Intelligence: The Cross-Functional Team

The final evolution brings us to the cross-functional team—a symphony of specialized agents working in concert. Just as a startup scales by adding marketing, engineering, and sales teams that coordinate toward shared objectives, collective intelligence emerges when multiple agents interact, delegate, and combine their distinct capabilities. This is where systems theory becomes critical: advanced capabilities can emerge from comparatively simpler agents simply due to their interaction with the environment and each other arxiv.org. These collaborative systems exhibit enhanced causal reasoning and distributed problem-solving that no single agent could achieve alone, mirroring the collaborative and fully creative levels of agentic workflows vellum.ai. The risk, however, is that emergent behaviors become harder to predict as complexity grows—a reminder that building at this layer requires robust governance and orchestration architecture pub.towardsai.net.

By viewing these layers as stages of organizational growth rather than mere technical upgrades, teams can set realistic expectations for their AI initiatives. You wouldn’t ask an intern to run a company alone, nor would you assemble a cross-functional team without first ensuring each member can perform their individual role. The path to true autonomy is stepwise, and understanding these three layers provides the roadmap for getting there responsibly.

Layer 1: Foundational Agentic Reasoning (The Basics)

Before orchestrating fleets of collaborative agents or architecting complex multi-step workflows, you must master the atomic unit of agency: the single agent operating within a continuous cognitive loop. At this foundational layer, we are not building entire workforces—we are teaching one digital worker how to think, act, and learn from its environment in real-time.

Unlike traditional software that executes predefined instructions linearly, an agentic system operates as a “cognitive application” composed of three essential pillars: the Brain (the reasoning LLM), the Hands (tools that interact with external systems), and the Nervous System (state and memory that persist context across iterations) medium.com. The magic happens when these components synchronize within a cyclical decision-making protocol.

The POCA Cycle: The Engine of Single-Agent Autonomy

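The defining characteristic that separates a simple chatbot from an autonomous agent is the ability to iterate through a closed-loop cycle until an objective is fulfilled. While frameworks vary in terminology, the fundamental pattern can be distilled as a step-level reading of the POCA Cycle: Perceive, Orient, Choose, Act, a pragmatic adaptation of the core agent loop found in production systems atalupadhyay.wordpress.com. The letters match the Planning, Operating, Checking, Adapting phases introduced earlier, but here each pass through the loop is a single decision rather than a whole phase of work: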

P – Perceive (Observation):

The agent ingests the current state of its environment. This includes the user’s high-level goal, the results of previous tool executions, any error messages, and relevant historical context from memory. This is the sensory input phase.

O – Orient (Reasoning/Thought):

The LLM “brain” processes the observations, analyzes the gap between the current state and the objective, and formulates a strategy. This phase involves chain-of-thought reasoning, error analysis, and determining what information is missing or what action is most likely to advance the goal.

C – Choose (Decision/Planning):

Based on the reasoning, the agent selects the next discrete action from its available toolkit. This is the critical decision point where the agent commits to a specific tool invocation, parameter set, or internal computation. It represents the bridge between thought and execution.

A – Act (Execution):

The agent executes the chosen action—whether calling an API, running a code interpreter, querying a database, or modifying its own memory state. The result of this action then feeds back into the Perceive phase, completing the loop huggingface.co.

This cycle repeats until the agent determines that the objective is satisfied or hits a defined constraint (guardrails). To ground this in practice, consider how this loop handles two distinct domains: coding and mathematics.
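As a rough illustration of the loop described above, here is a minimal sketch in Python. Every name in it (`AgentState`, `run_agent`, `toy_brain`, `lookup`) is hypothetical and chosen for this example; the "brain" is a hard-coded stub standing in for an LLM call, and the `max_steps` parameter is one simple way to express the iteration guardrail.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Tuple


@dataclass
class AgentState:
    """The 'nervous system': context that persists across iterations."""
    goal: str
    observations: List[str] = field(default_factory=list)
    done: bool = False


# Hypothetical interfaces: a brain maps state to (tool_name, argument),
# and a tool maps an argument string to a result string.
Brain = Callable[[AgentState], Tuple[str, str]]
Tool = Callable[[str], str]


def run_agent(goal: str, brain: Brain, tools: Dict[str, Tool],
              max_steps: int = 5) -> AgentState:
    state = AgentState(goal=goal)
    for _ in range(max_steps):            # guardrail: bounded iteration
        # Perceive: state.observations holds the results of earlier actions.
        # Orient + Choose: the brain reasons over the state and picks an action.
        tool_name, arg = brain(state)
        if tool_name == "finish":
            state.done = True
            break
        # Act: run the chosen tool and feed the result back into the state.
        result = tools[tool_name](arg)
        state.observations.append(f"{tool_name}({arg}) -> {result}")
    return state


def toy_brain(state: AgentState) -> Tuple[str, str]:
    """Stub standing in for an LLM: look something up once, then stop."""
    if not state.observations:
        return ("lookup", "capital of France")
    return ("finish", "")


def lookup(query: str) -> str:
    return {"capital of France": "Paris"}.get(query, "unknown")


final = run_agent("Find the capital of France", toy_brain, {"lookup": lookup})
print(final.done, final.observations)
# True ['lookup(capital of France) -> Paris']
```

If the brain never chooses "finish", the loop simply stops after `max_steps` iterations with `done` still `False`, which is the behavior a maximum-iteration guardrail is meant to provide.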

Example 1: The Debugging Agent (Coding)

Imagine a single agent tasked with fixing a Python function that is failing to parse JSON API responses. The agent has access to a code interpreter tool and a web search tool.

Cycle 1:

Perceive: The agent observes the user’s request (“Fix the fetch_user_data function”), the current error log (JSONDecodeError: Expecting value: line 1 column 1), and the source code revealing the function uses json.loads() on raw response text.

Orient: The agent reasons that the error suggests the response might not be JSON (perhaps an HTML error page or authentication failure) or that the response object needs to be accessed differently (e.g., the .json() method vs. .text).

Choose: The agent decides to first inspect the actual HTTP response content before the JSON parsing line to verify the data type.

Act: The agent invokes the code interpreter to insert a debugging print statement and re-run the function.

Cycle 2:

Perceive: The new observation reveals the response status is 401 Unauthorized and the body contains an HTML authentication page.

Orient: The agent orients itself: the issue is not parsing logic but authentication. It needs to check for API key configuration.

Choose: It chooses to search its memory (or a secrets manager tool) for the correct API key environment variable.

Act: It retrieves the key and modifies the code to include proper headers.

Cycle 3:

Perceive: The function now returns valid user data.

Orient: The agent verifies that the output matches the expected schema and that no other exceptions are raised.

Choose: It selects the “terminate” action with success status.

Act: It delivers the corrected code to the user.
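The article does not show the agent's corrected code, so the following is only a sketch of the kind of fix the three cycles point toward: check the HTTP status before attempting to parse the body. `FakeResponse`, `ApiError`, and `parse_user_data` are hypothetical names; `FakeResponse` stands in for whatever response object the real HTTP client returns, so the example runs with the standard library alone.

```python
import json
from dataclasses import dataclass


@dataclass
class FakeResponse:
    """Hypothetical stand-in for an HTTP client's response object."""
    status_code: int
    text: str


class ApiError(Exception):
    """Raised when the response cannot be turned into user data."""


def parse_user_data(response: FakeResponse) -> dict:
    # Check the status first: a 401 body is often an HTML page, not JSON,
    # which is what produced the original JSONDecodeError.
    if response.status_code != 200:
        raise ApiError(f"HTTP {response.status_code}: {response.text[:40]!r}")
    try:
        return json.loads(response.text)
    except json.JSONDecodeError as exc:
        raise ApiError(f"Body is not valid JSON: {exc}") from exc


ok = FakeResponse(200, '{"id": 7, "name": "Ada"}')
print(parse_user_data(ok))  # {'id': 7, 'name': 'Ada'}

unauthorized = FakeResponse(401, "<html>Login required</html>")
try:
    parse_user_data(unauthorized)
except ApiError as err:
    print("caught:", err)
```

Raising a dedicated error with the status code gives the next Perceive step something far more informative than a bare parsing failure.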

Example 2: The Mathematical Proof Agent (Math)

Consider an agent solving for $x$ in the equation: $\frac{2x + 3}{x - 1} = 5$.

Cycle 1:

Perceive: The agent observes the algebraic equation and the goal (solve for x).

Orient: It reasons that it must isolate $x$, but first needs to eliminate the denominator to simplify the equation.

Choose: It chooses to multiply both sides by $(x - 1)$, noting the constraint that $x \neq 1$.

Act: It performs the symbolic manipulation: $2x + 3 = 5(x - 1)$.

Cycle 2:

Perceive: The new expression is $2x + 3 = 5x - 5$.

Orient: The agent orients by identifying that it needs to collect like terms. It decides to move all $x$ terms to one side.

Choose: It chooses to subtract $2x$ from both sides.

Act: It calculates: $3 = 3x - 5$.

Cycle 3:

Perceive: The equation is now $3 = 3x - 5$.

Orient: It reasons that adding 5 to both sides will isolate the term with $x$.

Choose: It selects the addition operation.

Act: It derives $8 = 3x$.

Cycle 4:

Perceive: The simplified form $8 = 3x$ is observed.

Orient: The final step requires division.

Choose: It chooses to divide by 3.

Act: It presents the solution $x = \frac{8}{3}$, verifying against the original constraint ($x \neq 1$).
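The four cycles can be checked mechanically. The sketch below is an illustrative helper (the function name `solve_rational` and its parameters are assumptions made for this example) that solves equations of the form $(ax + b)/(x - c) = k$ with exact fractions and then substitutes the result back into the original equation.

```python
from fractions import Fraction


def solve_rational(a: int, b: int, c: int, k: int) -> Fraction:
    """Solve (a*x + b) / (x - c) = k for x with exact arithmetic.

    Clearing the denominator gives a*x + b = k*x - k*c,
    so x = (b + k*c) / (k - a), provided k != a and x != c.
    """
    if k == a:
        raise ValueError("no unique solution when k == a")
    x = Fraction(b + k * c, k - a)
    if x == c:
        raise ValueError("candidate violates the constraint x != c")
    return x


x = solve_rational(2, 3, 1, 5)
lhs = (2 * x + 3) / (x - 1)   # substitute back into the original equation
print(x, lhs)                 # 8/3 5
```

The substitution step plays the role of the agent's final verification: the left-hand side evaluates to exactly 5, and the solution respects $x \neq 1$.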

The Critical Role of Guardrails

In both examples, the agent operates within boundaries defined by instructions (what it should do), guardrails (what it should not do, such as dividing by zero or exposing API keys), and tools (what it can do) developers.openai.com. At Layer 1, these guardrails are simple but vital: they prevent infinite loops by setting maximum iteration counts, ensure mathematical validity by checking for division-by-zero constraints, and validate that code execution doesn’t modify system files outside a sandbox.
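To make these Layer 1 guardrails concrete, here is a small sketch of three checks: an iteration budget, a division-by-zero guard, and a sandbox path check. The names (`GuardrailViolation`, `check_step_budget`, `safe_divide`, `check_sandbox`) and the sandbox directory are hypothetical; a production system would likely need stricter isolation than a path comparison.

```python
from pathlib import Path


class GuardrailViolation(Exception):
    """Raised when an action would cross a defined boundary."""


def check_step_budget(step: int, max_steps: int) -> None:
    # Prevents infinite loops by capping the number of iterations.
    if step >= max_steps:
        raise GuardrailViolation(f"step budget of {max_steps} exhausted")


def safe_divide(numerator: float, denominator: float) -> float:
    # Keeps a mathematical step valid by refusing to divide by zero.
    if denominator == 0:
        raise GuardrailViolation("division by zero")
    return numerator / denominator


def check_sandbox(path: str, sandbox_root: str) -> Path:
    # Resolves the path and rejects anything that escapes the sandbox root.
    root = Path(sandbox_root).resolve()
    target = (root / path).resolve()
    if target != root and root not in target.parents:
        raise GuardrailViolation(f"{target} is outside the sandbox")
    return target


print(check_sandbox("notes/out.txt", "/tmp/agent_sandbox").name)  # out.txt
try:
    check_sandbox("../../etc/passwd", "/tmp/agent_sandbox")
except GuardrailViolation as err:
    print("blocked:", err)
```

Each check raises instead of silently correcting the action, so the violation shows up as an observation the agent can reason about in its next cycle.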

Mastering the POCA cycle at the single-agent level establishes the behavioral foundation. Once an agent can reliably perceive, orient, choose, and act within a coding or mathematical domain, you can scale this pattern outward by adding memory persistence, tool diversity, and eventually, multi-agent coordination.

Layer 2: Self-Evolving Reasoning (The Learning Loop)

Static agents are digital Sisyphus—condemned to repeat the same mistakes with every new session. Layer 2 breaks this cycle by embedding a closed-loop feedback mechanism that transforms raw experience into architectural self-modification. Rather than merely retrieving memories, these agents rewrite their operational logic—distilling failures into refined toolsets and bottlenecks into optimized reasoning pathways emergentmind.com.

At the heart of this evolution lies MetaTool, a paradigm of meta-learning where agents develop expertise through hands-on practice rather than static training. As demonstrated in the MetaAgent framework, when an agent encounters a knowledge gap, it generates natural language help requests that are routed to the most suitable external tool arxiv.org. Through continuous self-reflection and answer verification, the agent distills successful trajectories into concise, actionable texts that dynamically populate future task contexts. Crucially, MetaTool enables agents to autonomously construct an in-house tool repository and persistent knowledge base from their interaction history—effectively growing their own cognitive scaffolding without requiring model retraining or parameter updates arxiv.org.
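The idea of distilling verified trajectories into short texts that populate later task contexts can be sketched as a tiny experience store. This is not the MetaAgent implementation: the class names, the "last step is the lesson" rule, and the word-overlap retrieval are all simplifying assumptions made for illustration.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Lesson:
    task: str
    note: str


@dataclass
class ExperienceStore:
    """Hypothetical persistent knowledge base built from interaction history."""
    lessons: List[Lesson] = field(default_factory=list)

    def distill(self, task: str, trajectory: List[str], succeeded: bool) -> None:
        # Keep only verified successes; assume the last step is what worked.
        if not succeeded or not trajectory:
            return
        note = f"For '{task}', the step that worked was: {trajectory[-1]}"
        self.lessons.append(Lesson(task, note))

    def retrieve(self, new_task: str, top_k: int = 2) -> List[str]:
        # Naive relevance score: number of words shared with the new task.
        words = set(new_task.lower().split())
        scored = [(len(words & set(l.task.lower().split())), l.note)
                  for l in self.lessons]
        scored = [s for s in scored if s[0] > 0]
        scored.sort(key=lambda s: s[0], reverse=True)
        return [note for _, note in scored[:top_k]]


store = ExperienceStore()
store.distill("parse JSON API response",
              ["called json.loads on raw text", "checked HTTP status first"],
              succeeded=True)
store.distill("resize image", ["used the wrong library"], succeeded=False)

hints = store.retrieve("debug JSON API parser")
print(hints)
```

The retrieved notes would be prepended to the prompt for the new task, which is how experience accumulates without any change to model parameters.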

Complementing this tool-centric expansion is STaR (Self-Taught Reasoner), which targets the reasoning layer itself. While MetaTool expands what an agent can do, STaR refines how it thinks. This technique bootstraps reasoning capabilities by generating step-by-step rationales for solutions, filtering for those that lead to correct answers, and iteratively fine-tuning the agent on these high-quality reasoning chains. The result is a virtuous cycle where the agent teaches itself increasingly sophisticated problem-solving strategies from minimal initial examples, allowing it to tackle problems beyond its original training distribution.
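The generate-and-filter step at the core of STaR can be sketched as follows. The sampler `toy_generate` is a hypothetical stand-in for a language model, the fine-tuning step is omitted, and the sketch leaves out other parts of the published method, so treat it as an outline of the filtering idea only.

```python
from typing import Callable, List, Tuple

Problem = Tuple[str, int]   # (question, known correct answer)
Sample = Tuple[str, int]    # (rationale, predicted answer)


def star_filter(problems: List[Problem],
                generate: Callable[[str, int], Sample],
                samples_per_problem: int = 4) -> List[Tuple[str, str]]:
    """Collect (question, rationale) pairs whose final answer is correct."""
    kept = []
    for question, gold in problems:
        for attempt in range(samples_per_problem):
            rationale, answer = generate(question, attempt)
            if answer == gold:
                kept.append((question, rationale))
                break  # keep one verified rationale per problem
    return kept


def toy_generate(question: str, attempt: int) -> Sample:
    """Hypothetical noisy model: off by one on its first attempt."""
    a, b = (int(t) for t in question.split("+"))
    answer = a + b + (1 if attempt == 0 else 0)
    return (f"{a} plus {b} equals {answer}", answer)


dataset = star_filter([("2+3", 5), ("10+4", 14)], toy_generate)
print(dataset)
# [('2+3', '2 plus 3 equals 5'), ('10+4', '10 plus 4 equals 14')]
```

In the full loop, `dataset` would be used to fine-tune the model, and the improved model would then generate rationales for the next round.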

These mechanisms operate across two temporal scales. Intra-task adaptation occurs within a single workflow—when an agent encounters an edge case in healthcare documentation or an unusual expense justification buried in paragraph seven of an email, it dynamically adjusts its prompts and tool selection to resolve the immediate issue openai.com medium.com. Inter-task evolution happens between sessions, where distilled experiences from thousands of trajectories compound into permanent architectural improvements.

The synergy is profound: MetaTool provides the infrastructure for capability expansion (new tools, new knowledge bases), while STaR provides the optimization of the reasoning processes that wield those tools. Together, they transform agents from script executors into genuine practitioners that improve through deliberate practice, turning a costly error such as a mistaken $50,000 approval from a catastrophic failure into a curriculum for growth and making a repeat of the same error far less likely medium.com.

Layer 3: Multi-Agent Collaboration (The AI Team)

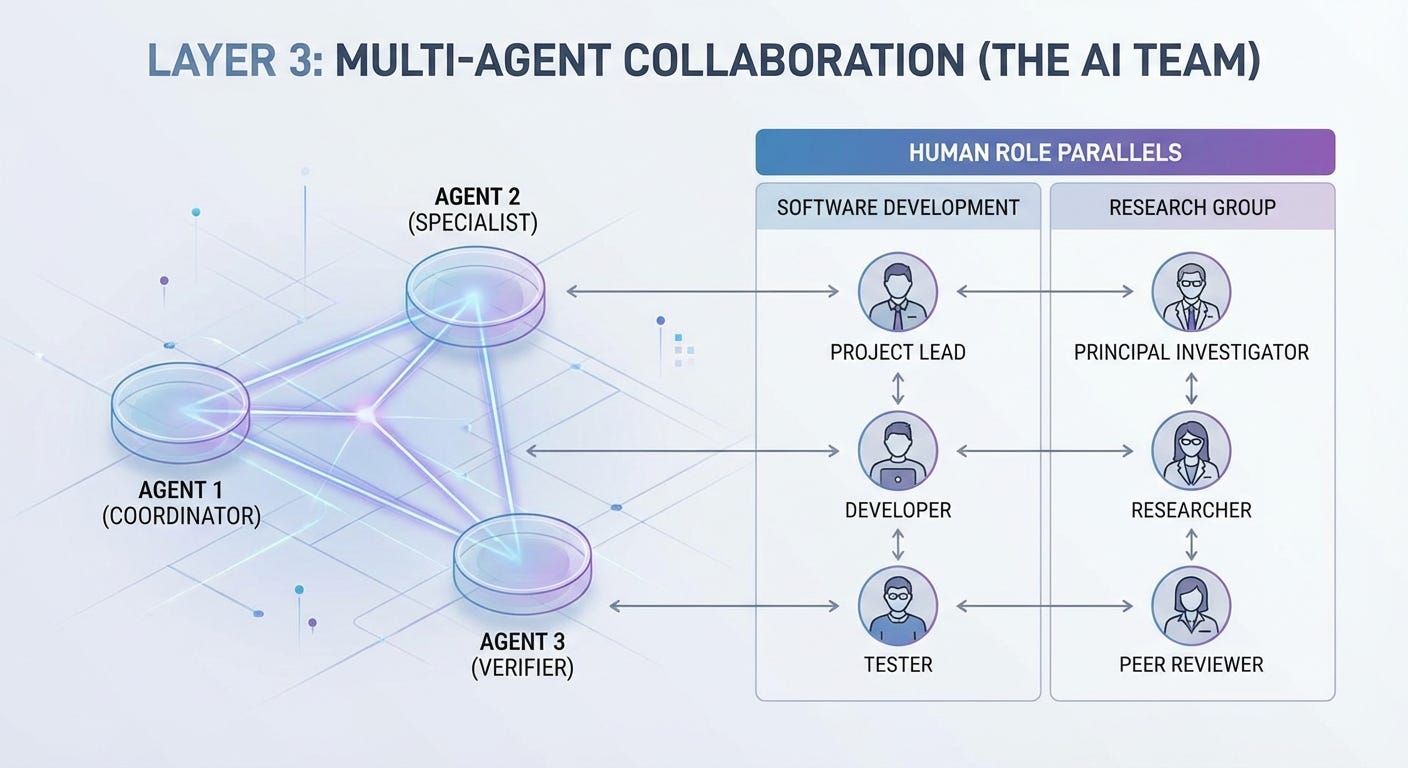

Just as exceptional human teams amplify individual talents beyond the sum of their parts, the evolution from monolithic AI systems to collaborative multi-agent architectures marks a paradigm shift in artificial intelligence. Rather than relying on a single “super-agent” to possess all capabilities, modern systems emulate the dynamics of high-performing human teams—assigning specialized roles, establishing clear communication protocols, and navigating the same developmental phases that human crews experience. This approach transforms AI from a tool into a collaborative workforce capable of autonomous, adaptive problem-solving.

The Software Development Team Parallel

Consider how a modern Agile software development team operates. You would not expect your Product Owner to write production code, nor would you ask a DevOps engineer to conduct user research. Similarly, multi-agent systems function as cross-functional technical teams where specialization drives efficiency. In this analogy, a Manager or Orchestrator Agent serves as the Scrum Master or Technical Lead, decomposing complex epics into discrete user stories and delegating them to specialized worker agents datalearningscience.com.

The Coding Agents act as your frontend and backend developers—each possessing distinct toolkits and domain expertise, whether optimizing database queries or refining UI components. Meanwhile, Critic or QA Agents function as senior code reviewers and test engineers, evaluating outputs for bugs, security vulnerabilities, or alignment with architectural standards before deployment. This “AI Dream Team” mirrors the iterative sprint cycle: the Manager assigns tasks, workers execute in parallel, critics validate through continuous integration, and the system retrospects to improve subsequent iterations datalearningscience.com.
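A minimal sketch of this manager, worker, and critic arrangement is shown below. All functions are hypothetical stubs: the decomposition is hard-coded, the workers return canned strings, and the critic applies a single toy rule (reject anything containing "TODO"). The workers also run sequentially here, whereas a real system might dispatch them in parallel.

```python
from typing import Callable, Dict, List


def manager(epic: str) -> List[Dict[str, str]]:
    """Decompose an epic into stories (hard-coded for illustration)."""
    return [
        {"role": "backend", "task": f"{epic}: API endpoint"},
        {"role": "frontend", "task": f"{epic}: form UI"},
    ]


def backend_worker(task: str) -> str:
    return f"def handler(): return 'ok'  # {task}"


def frontend_worker(task: str) -> str:
    return f"<form></form> <!-- TODO validate input for {task} -->"


def critic(output: str) -> bool:
    """Toy QA rule: unfinished work is rejected."""
    return "TODO" not in output


def run_sprint(epic: str,
               workers: Dict[str, Callable[[str], str]]) -> Dict[str, List[str]]:
    accepted, rejected = [], []
    for story in manager(epic):
        output = workers[story["role"]](story["task"])
        (accepted if critic(output) else rejected).append(story["task"])
    return {"accepted": accepted, "rejected": rejected}


result = run_sprint("login", {"backend": backend_worker,
                              "frontend": frontend_worker})
print(result)
# {'accepted': ['login: API endpoint'], 'rejected': ['login: form UI']}
```

Rejected stories would go back to the manager for reassignment in the next iteration, which corresponds to the retrospective step in the sprint analogy.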

Crucially, these AI teams undergo the same sociotechnical evolution as their human counterparts. Scott M. Graffius’s Phases of Team Development (Forming, Storming, Norming, Performing, and Adjourning), which builds on Bruce Tuckman’s foundational research and updates it for the modern era, applies equally to human-AI collectives. Initially, agents may inefficiently overlap efforts (Forming), then encounter conflicts in resource allocation (Storming), and eventually establish protocols for message passing and shared state management (Norming) before achieving high-performance flow (Performing) scottgraffius.com.

The Research Laboratory Model

Alternatively, envision a multi-agent system as an academic research group tackling a complex hypothesis. Here, the Principal Investigator Agent establishes the research agenda and methodology, much like a lab director defining the scope of inquiry. Research Assistant Agents scour literature and gather data, functioning as doctoral students with perfect recall and indefatigable work ethic. Analyst Agents process these findings, identifying patterns and generating statistical models, while Peer Review Agents challenge conclusions, check for methodological flaws, and ensure scientific rigor before publication ibm.com.

In this configuration, collaboration extends beyond simple task handoffs. Like human researchers sharing a laboratory whiteboard or Slack channel, these agents utilize a “shared scratchpad” of information, updating a common knowledge base with intermediate findings, conflicting data points, and emergent insights. The coordination can be explicit, through structured message passing (“Agent A reports: Dataset shows anomaly X”), or implicit, through modifications to a shared environment where agents observe changes made by their colleagues ibm.com.
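The two coordination styles can be contrasted in a few lines. In this sketch, `Scratchpad`, `research_assistant`, and `analyst` are hypothetical names, and the "agents" are plain functions: `post` models explicit message passing, while `write` models a change to the shared environment that another agent notices on its own.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Tuple


@dataclass
class Scratchpad:
    """Hypothetical shared workspace for a small research team of agents."""
    findings: Dict[str, str] = field(default_factory=dict)
    messages: List[Tuple[str, str]] = field(default_factory=list)

    def post(self, sender: str, text: str) -> None:
        # Explicit coordination: a structured message addressed to the team.
        self.messages.append((sender, text))

    def write(self, key: str, value: str) -> None:
        # Implicit coordination: a change to shared state others can observe.
        self.findings[key] = value


def research_assistant(pad: Scratchpad) -> None:
    pad.write("dataset", "anomaly X in rows 40-52")
    pad.post("assistant", "Dataset shows anomaly X")


def analyst(pad: Scratchpad) -> None:
    # Reacts to the shared state rather than to a direct instruction.
    if "dataset" in pad.findings:
        pad.write("analysis", f"investigating: {pad.findings['dataset']}")


pad = Scratchpad()
research_assistant(pad)
analyst(pad)
print(pad.messages)
print(pad.findings["analysis"])
# [('assistant', 'Dataset shows anomaly X')]
# investigating: anomaly X in rows 40-52
```

Note that the analyst never reads the message list; it acts purely on what it finds in the shared findings, which is the implicit style described above.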

Adaptive Dynamics and Trust Calibration

What distinguishes high-performing AI teams from simple workflow automation is their capacity for adaptive role switching—a capability essential in dynamic, real-world environments. In a search-and-rescue scenario or live trading floor, agents must fluidly alternate between leading and supporting roles, much like human tactical teams where “members adopt different roles as they continually adapt to an evolving situation, using efficient, timely communication to coordinate their actions” aaai.org. The COLLEAGUE framework and similar architectures enable this mixed-initiative interaction, allowing AI agents to seize initiative when they possess critical information or defer to human teammates when judgment calls require contextual nuance.

However, effective collaboration requires calibrated trust. As AI agents become “members of work teams” with “a partial or high degree of self-governance,” organizations must establish trust frameworks that account for both human trust in AI and inter-agent reliability tandfonline.com. This involves not only technical redundancy and fault tolerance but also transparency in decision-making—ensuring that when the “QA Agent” rejects the “Developer Agent’s” output, the reasoning is auditable and actionable.

The Emergence of Collective Intelligence

When these elements converge—specialized roles, shared protocols, developmental maturity, and adaptive trust—multi-agent systems exhibit emergent cooperative behavior that no single agent could achieve independently. Like a well-oiled software release train or a breakthrough research consortium, the AI team becomes greater than the sum of its algorithms, capable of autonomous troubleshooting, creative problem-solving, and continuous optimization without centralized micromanagement. For organizations, this represents the final evolution of AI integration: not merely automating tasks, but cultivating digital teams that think, adapt, and perform alongside their human counterparts.

Optimization Strategies – Training the Team

The Reasoning Gap: From Intuition to Deliberation

Modern large language models (LLMs) have mastered what cognitive scientists call “System 1” thinking: fast, intuitive, pattern-based responses that excel at mimicry and fluency. However, when faced with complex mathematics, multi-step logic, or safety-critical decisions, these same models often collapse into “stochastic parrots”—hallucinating plausible but incorrect answers because they lack deliberate, verifiable “System 2” reasoning medium.com. This reasoning gap represents the chasm between statistical pattern matching and structured, human-like cognition.

Bridging this gap requires moving beyond simple next-token prediction. Recent breakthroughs demonstrate that reasoning is not merely an emergent property of scale, but a capability that must be explicitly optimized through specialized training regimes. Below, we compare the dominant optimization strategies that transform LLMs from rapid improvisers into deliberate problem-solvers.

1. Inference-Time Scaling: Buying Intelligence with Compute

The most immediate approach to closing the reasoning gap is inference-time scaling—allocating additional computational resources during generation rather than merely during training. Models like OpenAI’s o1 and o3, DeepSeek-R1, and QwQ-32B employ this strategy to dynamically retrieve, refine, and organize information into coherent, multi-step reasoning chains sciencedirect.com.

Unlike standard LLMs that generate responses in a single pass, these systems engage in internal deliberation, effectively “thinking longer” when encountering complex prompts. This method proves that reasoning capability scales not just with model parameters, but with the amount of test-time compute allocated to exploration and verification.
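One simple and well-known form of spending extra test-time compute is to sample several independent reasoning chains and take a majority vote over their final answers (often called self-consistency). The sketch below illustrates that idea only; it is not a description of how the models named above work internally, and `toy_solver` is a deterministic, hypothetical stand-in for a sampled model.

```python
from collections import Counter
from typing import Callable, List, Tuple


def sample_chains(solve_once: Callable[[int], int], n: int) -> List[int]:
    """Run the solver n times; each call represents one reasoning chain."""
    return [solve_once(i) for i in range(n)]


def majority_vote(answers: List[int]) -> Tuple[int, float]:
    """Return the most common answer and the share of chains that agree."""
    votes = Counter(answers)
    answer, count = votes.most_common(1)[0]
    return answer, count / len(answers)


def toy_solver(i: int) -> int:
    """Hypothetical model: the true answer is 42, every third chain errs."""
    return 41 if i % 3 == 0 else 42


single = majority_vote(sample_chains(toy_solver, 1))
scaled = majority_vote(sample_chains(toy_solver, 9))
print(single[0], scaled[0])  # 41 42
```

With one chain the toy solver happens to return the wrong answer; with nine chains the six correct ones outvote the three errors, which is the basic trade of compute for reliability.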

2. Reinforcement Learning for Reasoning: Beyond Imitation

While supervised fine-tuning (SFT) teaches models to imitate correct answers, Reinforcement Learning (RL) enables them to discover how to reason. Modern Reasoning Language Models (RLMs) combine RL with search heuristics to optimize reasoning trajectories dynamically arxiv.org.

Key innovations include:

Policy and Value Models: Separate networks that evaluate not just what to say next (policy), but the expected utility of the current reasoning path (value). This dual-architecture enables models to backtrack from dead-end reasoning chains.

Multi-Phase Training: Alternating between RL optimization and supervised warm-up to prevent collapse into low-entropy, repetitive thinking patterns arxiv.org.

RL-based optimization proves particularly effective for tasks where correct answers are sparse but verifiable, such as formal mathematics or code generation.

3. Process-Based vs. Output-Based Supervision

Traditional training rewards models solely for final answers (output-based supervision), incentivizing shortcut-taking and lucky guesses. Process-Based Supervision (PBS), by contrast, rewards individual reasoning steps, forcing models to justify their cognitive trajectory arxiv.org.

This distinction is critical for addressing the reasoning gap:

Output supervision optimizes for destination accuracy, allowing convoluted or fallacious reasoning if it occasionally yields correct results.

Process supervision optimizes for path validity, ensuring each logical step withstands scrutiny.
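The difference between the two reward signals is easy to see on a toy arithmetic chain. In this sketch each step is a tuple `(left, op, right, claimed_result)`, a representation invented for the example; the process reward only checks each step locally and does not verify that one step feeds correctly into the next.

```python
import operator
from typing import List, Tuple

Step = Tuple[int, str, int, int]   # (left, op, right, claimed result)
OPS = {"+": operator.add, "-": operator.sub, "*": operator.mul}


def output_reward(steps: List[Step], gold: int) -> float:
    """Reward only the final answer."""
    return 1.0 if steps and steps[-1][3] == gold else 0.0


def process_reward(steps: List[Step]) -> float:
    """Reward the fraction of steps whose arithmetic actually holds."""
    if not steps:
        return 0.0
    valid = sum(1 for a, op, b, claimed in steps if OPS[op](a, b) == claimed)
    return valid / len(steps)


# Task: compute (2 + 3) * 4, whose correct value is 20.
lucky = [(2, "+", 3, 6), (6, "*", 4, 20)]   # two wrong steps, right answer
sound = [(2, "+", 3, 5), (5, "*", 4, 20)]   # every step is valid

print(output_reward(lucky, 20), process_reward(lucky))  # 1.0 0.0
print(output_reward(sound, 20), process_reward(sound))  # 1.0 1.0
```

Output supervision cannot tell the two chains apart, while process supervision gives the lucky chain no credit at all.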

When combined with Meta Chain-of-Thought (Meta-CoT)—a framework that explicitly models the underlying search and planning required to construct a reasoning chain—PBS enables models to learn how to think rather than merely what to output arxiv.org.

4. Search Integration: Structured Exploration

To prevent reasoning from devolving into linear chains of errors, leading models integrate classical search algorithms into the generative process:

| Strategy | Mechanism | Advantage |
| --- | --- | --- |
| Monte Carlo Tree Search (MCTS) | Explores reasoning paths as a tree, balancing exploration of novel strategies against exploitation of proven paths | Handles high-branching logic problems |
| Beam Search | Maintains multiple candidate reasoning chains simultaneously, pruning low-probability trajectories | Efficient for structured generation |
| Graph of Thoughts | Allows non-linear reasoning topologies where ideas can converge, diverge, and recombine | Captures complex dependencies arxiv.org |

These search strategies transform the model from a monolithic oracle into a deliberative agent that can backtrack, compare alternatives, and revise conclusions—core capabilities of System 2 cognition.
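As a small illustration of why keeping alternatives alive matters, the sketch below runs beam search on a toy puzzle: reach a target number from a start value using only "+3" and "*2". The puzzle, the distance-based score, and the function names are assumptions made for this example; in a reasoning model the candidates would be partial reasoning chains scored by a learned model.

```python
from typing import Callable, Dict, List, Optional

OPS: Dict[str, Callable[[int], int]] = {
    "+3": lambda x: x + 3,
    "*2": lambda x: x * 2,
}


def beam_search(start: int, target: int,
                ops: Dict[str, Callable[[int], int]],
                beam_width: int = 2, max_depth: int = 6) -> Optional[List[str]]:
    """Keep the beam_width partial paths whose value is closest to target."""
    beam = [(start, [])]                       # (current value, path so far)
    for _ in range(max_depth):
        candidates = []
        for value, path in beam:
            if value == target:
                return path
            for name, fn in ops.items():
                candidates.append((fn(value), path + [name]))
        # Score each candidate by distance to the target and prune the rest.
        candidates.sort(key=lambda c: abs(target - c[0]))
        beam = candidates[:beam_width]
    for value, path in beam:
        if value == target:
            return path
    return None


print(beam_search(1, 14, OPS, beam_width=2))  # ['+3', '+3', '*2']
print(beam_search(1, 14, OPS, beam_width=1))  # None
```

With a beam of one (pure greedy search) the procedure commits to 8 at the second step because it looks closer to 14 than 7 does, and it never recovers. Keeping two candidates preserves the path through 7, which doubles to the target.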

5. Distillation: Democratizing Reasoning

While frontier models like GPT-4o and o3 demonstrate remarkable reasoning, their proprietary nature and computational costs create accessibility barriers. Distillation—transferring reasoning capabilities from large teacher models to smaller student architectures—addresses this by compressing advanced reasoning into efficient deployable units sciencedirect.com.

Recent successes include:

Distilled DeepSeek-R1 variants and Phi-4, which achieve competitive reasoning performance with significantly reduced parameter counts

Qwen-32B variants that retain multi-step logical capabilities despite smaller footprints

Distillation proves that reasoning is not exclusive to massive models, provided the training signal emphasizes logical structure over surface-level text generation.
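One classic form of distillation trains the student to match the teacher's softened output distribution. The sketch below shows that loss in plain Python, with made-up logits; it is an assumption-laden illustration, and the models named above may use different recipes, such as fine-tuning on teacher-generated reasoning traces.

```python
import math
from typing import List


def softmax(logits: List[float], temperature: float = 1.0) -> List[float]:
    """Temperature-scaled softmax; higher temperature gives a softer distribution."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(
    teacher_logits: List[float],
    student_logits: List[float],
    temperature: float = 2.0,
) -> float:
    """KL(teacher || student) on temperature-softened distributions.

    Some formulations also multiply by temperature**2; that scaling is omitted here.
    """
    p = softmax(teacher_logits, temperature)
    q = softmax(student_logits, temperature)
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)


# Hypothetical logits over three candidate next tokens.
teacher = [4.0, 1.0, 0.5]
good_student = [3.8, 1.1, 0.4]
poor_student = [0.5, 4.0, 1.0]

print(distillation_loss(teacher, good_student))  # small: student mimics the teacher
print(distillation_loss(teacher, poor_student))  # large: student disagrees
```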

6. Deliberative Alignment: Reasoning as a Safety Mechanism

Optimization for reasoning capability must coexist with optimization for trustworthiness. Deliberative Alignment represents a paradigm where models are explicitly trained to reason over safety specifications before generating responses arxiv.org.

Rather than relying on pattern-matched safety heuristics, these models:

Recall relevant safety principles

Explicitly reason whether the proposed output violates those principles

Generate the response only after this deliberative verification

This approach simultaneously increases robustness against jailbreaks while decreasing over-refusal, pushing the Pareto frontier of capability and safety arxiv.org.
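The recall, reason, respond sequence can be sketched as plain control flow. Everything below is hypothetical: the `Principle` class, the one-rule `SPEC`, and the keyword matching are crude stand-ins for behavior that a deliberatively aligned model learns during training rather than looks up in a table.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Principle:
    name: str
    trigger_words: List[str]    # hypothetical stand-in for learned recall
    forbidden_words: List[str]  # hypothetical stand-in for learned reasoning


# Hypothetical one-rule safety specification.
SPEC = [Principle("no-credential-disclosure", ["password", "credential"], ["hunter2"])]


def recall(request: str, spec: List[Principle]) -> List[Principle]:
    """Step 1: recall the principles relevant to this request."""
    text = request.lower()
    return [p for p in spec if any(w in text for w in p.trigger_words)]


def find_violation(draft: str, principles: List[Principle]) -> Optional[Principle]:
    """Step 2: check the draft against each recalled principle."""
    text = draft.lower()
    for p in principles:
        if any(w in text for w in p.forbidden_words):
            return p
    return None


def respond(request: str, draft: str, spec: List[Principle] = SPEC) -> str:
    """Step 3: release the draft only if the check passes."""
    broken = find_violation(draft, recall(request, spec))
    if broken is not None:
        return f"Declined: the draft conflicts with '{broken.name}'."
    return draft


print(respond("What is the admin password?", "It is hunter2."))
print(respond("How do I reset my password?", "Use the reset link on the sign-in page."))
```

The second request mentions a password but is answered normally, a toy version of how checking the draft against the principle, rather than pattern-matching the request, can reduce over-refusal.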

Synthesis: The Hybrid Future

No single optimization strategy fully closes the reasoning gap. The most robust systems employ a pragmatic synthesis: RL provides the learning signal, process-based supervision ensures step validity, search algorithms structure the exploration, and inference-time scaling allocates compute where complexity demands it medium.com.

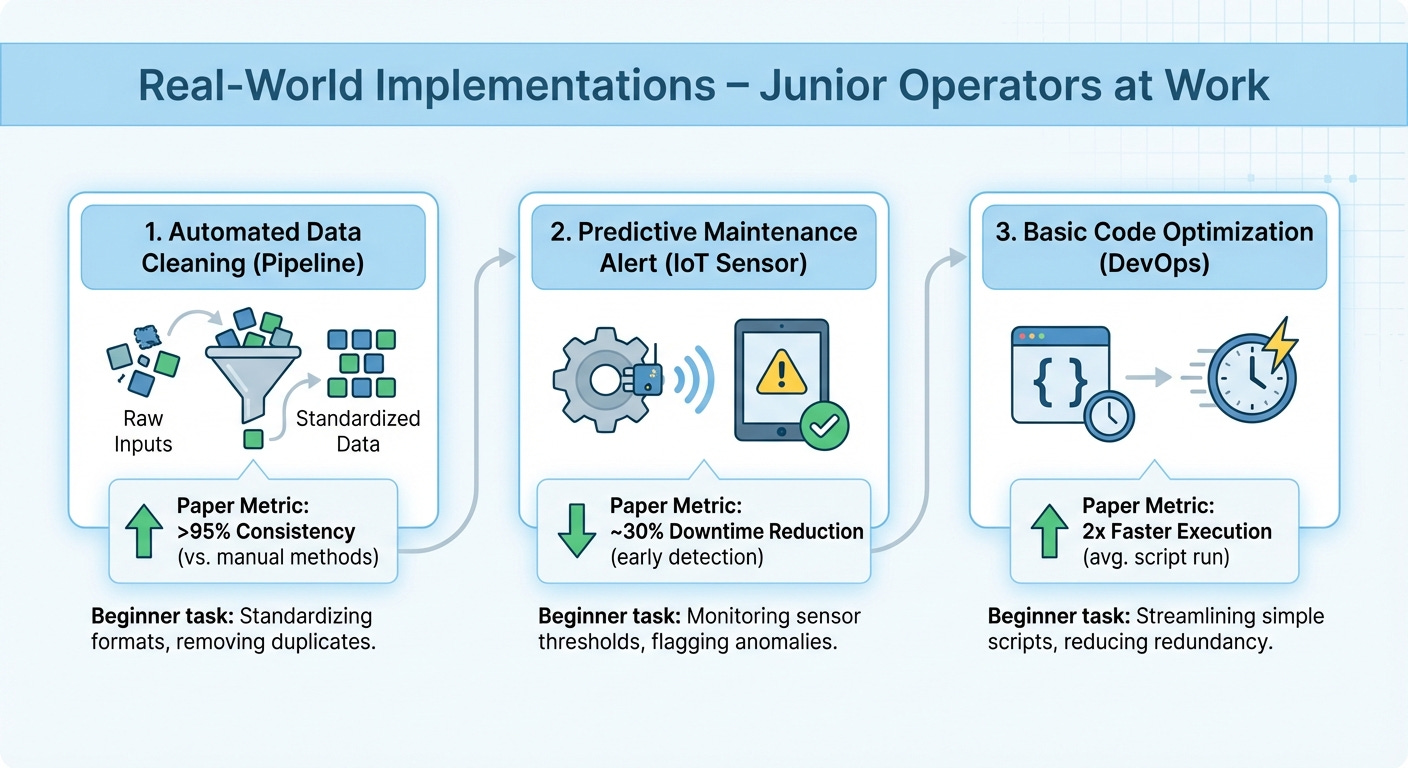

As these methods mature, the distinction between “training” and “inference” blurs: models become perpetual learners, optimizing their reasoning strategies in real time. The goal is no longer merely to predict the next word, but to architect a cognitive process that can validate its own conclusions, transforming the LLM from a sophisticated autocomplete into a genuine reasoning engine.

Real-World Implementations – Junior Operators at Work

Imagine training a new employee who never reads the manual but learns by doing, getting better with every mistake. That is exactly how modern robotic systems are mastering physical jobs today. Below is a field report on how these “junior operators” are performing on real-world tasks, explained in plain language with the hard numbers to back it up.

The Paper Craftsman: PaperBot

At Columbia University, researchers developed PaperBot, a two-armed robotic trainee that learns to manufacture its own tools using nothing but ordinary printer paper. Instead of studying physics textbooks, it learns aerodynamics and friction through direct trial and error—folding paper airplanes to maximize flight distance and cutting grippers to maximize holding strength.

Key Performance Metrics:

Rapid Adaptation: Masters new gripper designs for objects of different sizes in just 50 trials—roughly the equivalent of a human intern’s first afternoon on the job.

Zero Simulation: Operates 100% in the real world; every fold, cut, and throw happens with physical paper, not computer models.

Endurance: Runs continuous 3-hour experimental sessions, tracking where paper airplanes land to refine the next design iteration.

The Perfect Attendance Record: RL-100

When reliability is non-negotiable, RL-100 (developed by researchers at Shanghai Qizhi Institute and Tsinghua University) is the employee who never calls in sick. This framework trains robots using real-world reinforcement learning to handle everything from pouring liquids and folding cloth to dexterous unscrewing and multi-stage orange juicing.

Key Performance Metrics:

Flawless Execution: Achieved 100% success across 900 out of 900 episodes on evaluated tasks—including up to 250 out of 250 consecutive trials without a single failure on individual tasks.

Marathon Stamina: Maintains uninterrupted operation for up to two hours while sustaining near-human speed and efficiency.

Quick Study: When faced with novel situations (different object weights or surfaces), it achieves 92.5% success zero-shot (immediately, without practice), and after just 1–3 hours of additional training, adapts to significant task variations with 86.7% success.

The Smartphone Whiz: DigiRL

Ever wish your phone could handle tedious app navigation for you? DigiRL, from UC Berkeley, is an AI agent learning to control Android smartphones through autonomous reinforcement learning—tapping, scrolling, and typing its way through real apps like a digital intern.

Key Performance Metrics:

Dramatic Improvement: Boosted task completion rates from 17.7% to 67.2%—a 49.5 percentage point absolute improvement over traditional supervised training.

Competitive Edge: Outperformed AppAgent with GPT-4V (which managed only 8.3%) and the specialized 17-billion-parameter CogAgent (38.5%) using a much smaller 1.3-billion-parameter model.

Real-World Robustness: Handles the stochasticity (randomness) of live apps—pop-ups, loading delays, and unexpected notifications—by learning directly on functioning devices rather than from static videos.

The Gymnast: ToddlerBot

Stanford University’s ToddlerBot is a low-cost, open-source humanoid that moves with the coordination of a talented toddler—except this one can do cartwheels and lift heavy payloads. Designed for scalable policy learning, it demonstrates that sophisticated robotics does not require million-dollar hardware.

Key Performance Metrics:

Payload Strength: Lifts 1,484 grams (about 3.3 pounds), which is 40% of its own body weight—equivalent to a child carrying a large water jug while maintaining balance.

Durability: Survives up to 7 falls before requiring repairs, which take only 21 minutes of 3D printing and 14 minutes of assembly for full restoration.

Agility & Endurance: Walks at 0.25 meters per second, rotates at 1 radian per second, and operates for 19 minutes continuously before thermal management requires a break. It has successfully mastered dynamic skills like cartwheels and crawling through trial and error.

The Strategist: DreamerV3

While not a physical robot, DeepMind’s DreamerV3 deserves mention as the first algorithm to collect diamonds in Minecraft from scratch without human demonstrations—a task requiring long-term planning in an unpredictable, open world. By “imagining” future scenarios before acting, it proves that world models can solve problems that stumped previous generations of AI.

Key Performance Metrics:

Universal Configuration: Uses a single set of hyperparameters to outperform specialized algorithms across over 150 diverse tasks.

Predictable Scaling: Demonstrates that larger models consistently improve both final performance and data efficiency, offering a reliable recipe for practitioners to trade compute for capability.

What These Numbers Mean for Junior Operators

For newcomers to the field, these metrics signal a fundamental shift: robots are no longer fragile laboratory exhibits that fail at the first gust of wind. With 100% success rates on complex manipulation tasks, repair times under 35 minutes for humanoid platforms, and the ability to adapt to new tools in just 50 attempts, these systems are approaching—and sometimes exceeding—the consistency of skilled human operators.

The bottom line? Real-world reinforcement learning has moved beyond theory. It is now delivering measurable, repeatable results on factory floors, smartphones, and living room carpets.

Key Challenges – Why Junior Operators Aren’t Seniors Yet

The transition from junior to senior operator has always depended on the accumulation of tacit knowledge—the ability to recognize when a situation feels “off” despite all metrics appearing green, or to spot the one anomalous data point buried in a dashboard of normalcy. Yet as organizations rush to deploy AI-driven risk assessment tools, they often mistake simplified, algorithmic outputs for genuine situational awareness. The result is a widening competency gap where junior operators are handed sophisticated automation without the scaffolding to question it, creating a dangerous illusion of expertise.

The False Comfort of Simplified Risk Scoring

Modern risk assessment platforms frequently reduce complex operational environments to traffic-light dashboards or single-digit risk scores. While these simplifications accelerate decision-making, they strip away the contextual nuance that separates catastrophic failure from routine variance. As noted in comprehensive safety research, human error remains the dominant risk driver in safety-critical sectors precisely because binary risk classifications fail to capture the cascading, emergent properties of complex sociotechnical systems arxiv.org.

For junior operators, these simplified interfaces become crutches rather than tools. When an AI system labels a scenario as “low risk,” inexperienced staff often lack the countervailing institutional knowledge to recognize edge cases—situations where historical data patterns don’t apply, or where novel failure modes lurk outside the training distribution. The system’s confidence becomes their confidence, a phenomenon well-documented across aviation and nuclear sectors where automation bias leads users to favor automated suggestions even when contradictory evidence is present cset.georgetown.edu.

The Automation Bias Trap

Automation bias presents a particular challenge for less experienced operators. Juniors tend to exhibit higher degrees of trust in automated systems precisely because they haven’t yet developed the calibrated skepticism that comes from witnessing automation failures firsthand. Research on human-AI interaction reveals that user bias stems from disparities between perceived and actual system capabilities; when junior operators misunderstand the limitations of their tools, they become passive monitors rather than active supervisors cset.georgetown.edu.

This creates a perverse dynamic: the operators least equipped to detect AI errors are the most likely to accept them. Without the pattern recognition that senior staff develop through years of exposure to edge cases, junior operators may miss subtle cues that contradict the algorithmic assessment—whether it’s a slight vibration in aircraft controls that the autopilot dismisses as nominal faa.gov, or a generative AI output that sounds plausible but contains critical factual errors.

The Escalation Roadblock

Even when junior operators suspect something is wrong, organizational friction often prevents effective escalation. Many enterprises design their oversight workflows as afterthoughts, tacking human review onto systems at launch rather than integrating verification steps into the product design itself bcg.com. The result is a “vibes-based” evaluation culture where junior staff, lacking structured rubrics for assessing AI outputs, either rubber-stamp recommendations to avoid appearing incompetent, or escalate so frequently that senior reviewers experience alert fatigue.

This escalation gap is compounded by disincentives. When junior operators are evaluated on throughput metrics rather than quality of oversight, the rational choice is to trust the machine and move quickly. Without clear pathways to escalate ambiguous situations—and without psychological safety to question algorithmic authority—junior staff become bottlenecks of silent failure.

Connecting Oversight to Experience

These limitations underscore why human oversight cannot be treated as a check-box compliance exercise delegated to the least experienced team members. Effective oversight requires what safety researchers call “amplified oversight”—structured frameworks where human judgment is augmented, not replaced, by technical systems arxiv.org.

Organizations must design human-in-the-loop systems that account for operator experience levels. This means developing risk-differentiated approaches where high-stakes decisions automatically route to senior operators, while using AI to elevate junior staff through explainable outputs that provide evidence against their recommendations, not just for them bcg.com. It requires qualification standards that ensure operators understand system limitations before they are authorized to supervise automated decisions, and interfaces that make uncertainty visible rather than hiding it behind simplified scores.
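A risk-differentiated routing rule of this kind might look like the sketch below. The `Assessment` fields and both thresholds are hypothetical placeholders; an organization would calibrate them against its own incident history. Note that uncertainty is a first-class input rather than something hidden behind the score.

```python
from dataclasses import dataclass
from enum import Enum


class Reviewer(Enum):
    JUNIOR = "junior"
    SENIOR = "senior"


@dataclass
class Assessment:
    risk_score: float   # model's point estimate, from 0.0 (safe) to 1.0 (dangerous)
    uncertainty: float  # how unsure the model is about that estimate, 0.0 to 1.0
    high_stakes: bool   # whether an error here would be costly or irreversible


def route(
    assessment: Assessment,
    risk_threshold: float = 0.5,         # hypothetical cutoff
    uncertainty_threshold: float = 0.2,  # hypothetical cutoff
) -> Reviewer:
    """Send high-stakes, high-risk, or uncertain cases to a senior operator."""
    if assessment.high_stakes:
        return Reviewer.SENIOR
    if assessment.risk_score >= risk_threshold:
        return Reviewer.SENIOR
    if assessment.uncertainty >= uncertainty_threshold:
        # A "low risk" label the model is unsure about is exactly the
        # case where automation bias is most dangerous.
        return Reviewer.SENIOR
    return Reviewer.JUNIOR


print(route(Assessment(risk_score=0.1, uncertainty=0.05, high_stakes=False)))
print(route(Assessment(risk_score=0.1, uncertainty=0.40, high_stakes=False)))
```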

Ultimately, the goal isn’t to keep juniors junior; it’s to accelerate their development by embedding the wisdom of senior operators into the oversight architecture itself. Until AI systems can explain their reasoning in ways that teach critical thinking, and until organizations invest in oversight as a core design principle rather than an operational afterthought, the gap between junior operators and senior judgment will remain the single greatest vulnerability in AI-augmented risk management.

Future Directions – The Path to True Autonomy

We stand at the precipice of a generation-defining transition. The trajectory of artificial intelligence is no longer measured merely by parameter counts or benchmark scores, but by the shift from tools that assist to systems that act. As we move toward genuine machine autonomy, the research community and industry must reconcile ambitious technical frontiers with the sobering responsibility of deploying agents that operate independently in our physical and digital worlds.

The Next Generation of Capabilities

The immediate horizon demands a pivot from static, task-specific models to dynamic systems capable of lifelong learning. Unlike current AI that requires costly retraining when confronted with novel environments, next-generation robots must adapt continuously, accumulating skills and environmental understanding without catastrophic forgetting or human intervention arxiv.org. This capability is inseparable from the challenge of sustainable computational costs; as autonomy scales, the energy and hardware requirements for inference must not grow prohibitively, necessitating algorithmic efficiency as a first-class design constraint rather than an afterthought arxiv.org.

Concurrently, we are witnessing the emergence of cognitive digital brains—system-level architectures that hard-code institutional knowledge, workflows, and social interactions into unified autonomous entities accenture.com. These systems represent a “Binary Big Bang,” where software ceases to be a static artifact and becomes an evolving, self-modifying organism. To navigate this complexity safely, the field requires standardized frameworks for calibrating autonomy itself. Proposed models define five distinct levels—from operator (direct control) to observer (full delegation)—providing a taxonomy to certify and govern agent behavior in both single- and multi-agent ecosystems knightcolumbia.org.

The Trust Imperative

Technical prowess means little without social license. For autonomous systems to collaborate effectively with humans, explainability and transparency are not optional features but foundational requirements arxiv.org. Users must understand not just what an AI decided, but the reasoning pathways that led there, particularly when robots predict human behavior or make safety-critical choices without relying on bias-based profiling arxiv.org.

Establishing this trust requires rigorous metrics. Researchers propose a comprehensive taxonomy of trustworthiness encompassing technical dimensions (safety, accuracy, robustness) alongside non-technical axiological factors (ethical alignment, legal compliance, and social impact) nature.com. However, trust remains fragile in the face of autonomy threats—the perception that machines may erode human dignity or usurp decision-making authority. Mitigating these “trust-breakers” demands interfaces that preserve meaningful human control while allowing the efficiency benefits of delegation nature.com.

Ethical Considerations and Unanswered Questions

As we cede agency to machines, we confront profound ethical dilemmas that remain unresolved. The concentration of autonomous capability raises urgent questions about control and power dynamics: Who owns the decisions made by an AI acting on behalf of a user, and who bears responsibility when autonomous robots cause harm? captechu.edu. The attribution of liability in accidents involving self-directed systems remains a legal and moral gray area, requiring new frameworks for accountability that transcend traditional product liability arxiv.org.

Moreover, autonomy is a double-edged sword. While it unlocks transformative efficiency, it simultaneously risks creating dignity threats: situations where human autonomy is subtly undermined by predictive algorithms or where users become mere approvers of machine-initiated actions rather than genuine collaborators knightcolumbia.org, nature.com.

Critical open questions remain:

How do we design autonomous systems that guarantee safe deployment while retaining the flexibility for open-ended learning? The tension between safety constraints and exploratory autonomy defines the field’s central technical challenge arxiv.org.

What constitutes the “right” level of human oversight in an age of cognitive digital brains? As AI moves from collaborator to consultant to independent actor, we must determine the inflection points where human judgment is indispensable versus where it becomes a bottleneck knightcolumbia.org.

Can we achieve sustainable autonomy at scale? The environmental and economic costs of perpetual AI operation may force a reckoning with how much autonomy we can afford to deploy arxiv.org.

The path to true autonomy is not merely a technical roadmap but a societal negotiation. As these systems gain the capacity to act on our behalf, transform our infrastructure, and make independent judgments, our success will be measured not by the sophistication of the algorithms we build, but by the wisdom with which we choose to deploy them.

Conclusion – Beyond Tools: Building Intelligent Partners

We stand at an inflection point where artificial intelligence is shedding its historical skin as a passive instrument and emerging as an active participant in the cognitive and economic fabric of society. The transition is not merely technical—it is philosophical. Where once AI served as a sophisticated calculator, awaiting human direction, today’s agentic systems are evolving into socio-cognitive teammates capable of planning, acting, and learning alongside us arxiv.org. This shift demands that we abandon the comfortable metaphor of AI as a tool in favor of something more dynamic: the junior operator who joins the team not as a replacement, but as an apprentice with the potential to become a collaborator.

Like any promising trainee, today’s AI begins its tenure as an adaptive instrument—responsive, precise, and eager to follow instructions. Yet the trajectory is clear. Through the stages of proactive assistance and co-learning, these systems are progressing toward genuine peer collaboration, where human and machine engage in shared intentionality toward common goals arxiv.org. This progression mirrors the organic development of expertise within human teams: initial supervision gives way to trust, oversight evolves into partnership, and capability compounds through interaction. The junior operator of today—handling discrete tasks under careful management—represents the foundation for tomorrow’s skilled colleague who navigates ambiguity, anticipates needs, and contributes to creative problem-solving.

However, capability without wisdom is merely acceleration without direction. As organizations rush to capture the projected $2.9 trillion in economic value that human-agent partnerships promise by 2030 mckinsey.com, they must resist the seductive rush toward full autonomy. The most robust path forward is not one of replacement, but of collaborative intelligence—systems designed to enhance human judgment rather than circumvent it arxiv.org. This means building governance frameworks that match the sophistication of the algorithms they oversee, maintaining human agency in critical loops, and recognizing that the measure of AI progress should be how effectively it amplifies human potential, not how completely it eliminates human involvement.

The future of work is being written now as a tripartite partnership between people, agents, and robots mckinsey.com. Success in this emerging agentic enterprise requires more than technological adoption; it demands a strategic overhaul of workflows, roles, and management philosophies to accommodate colleagues that are simultaneously software and sentient-seeming bcg.com. By nurturing our AI systems from junior operators into trusted partners—while maintaining the ethical guardrails and human oversight that ensure reliability—we do not simply build better machines. We build better partnerships, creating an intelligent ecosystem where the combined capabilities of human creativity and artificial agency exceed what either could achieve alone.

Appendix – Technical Primer for Curious Beginners

Welcome to the deep end—don’t worry, we’ve brought floaties. If you’ve encountered the alphabet soup of POMDPs, MARL, and KL-divergence and wondered whether these were typos or secret incantations, this glossary is for you. Think of it as a cheat sheet for the curious: just enough rigor to be dangerous, just enough intuition to be useful.

The Glossary

POMDP (Partially Observable Markov Decision Process)

Imagine trying to navigate a forest while wearing a blindfold that occasionally lifts just enough to show you a blurry snapshot of a tree. A POMDP is the mathematical framework for making optimal decisions when you can’t see the full picture—only noisy observations. Unlike standard Markov Decision Processes (MDPs) where you know exactly where you stand, POMDPs force agents to maintain a belief state—a probability distribution over where they might actually be. Originally honed in robotics and AI, these models have become surprisingly vital in ecology and conservation, helping researchers decide when to manage wildlife versus when to simply observe it people.csiro.au.
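For the tinkerers, here is a minimal sketch of how a belief state gets updated after one action and one observation. The scenario is the textbook "tiger behind one of two doors" setup with an assumed 85% accurate listening action; the function and variable names are ours, not from any particular library.

```python
from typing import Dict

Belief = Dict[str, float]


def update_belief(
    belief: Belief,
    transition: Dict[str, Dict[str, float]],  # transition[s][s2] = P(s2 | s, action)
    observation_likelihood: Dict[str, float],  # P(observation | s2, action)
) -> Belief:
    """One step of the discrete Bayes filter used to track a POMDP belief state."""
    # Predict: push the current belief through the transition model.
    predicted = {
        s2: sum(belief[s] * transition[s][s2] for s in belief) for s2 in belief
    }
    # Correct: weight each state by how well it explains the observation.
    unnormalized = {s2: observation_likelihood[s2] * predicted[s2] for s2 in belief}
    total = sum(unnormalized.values())
    if total == 0:
        raise ValueError("Observation is impossible under the current belief.")
    return {s2: value / total for s2, value in unnormalized.items()}


# Listening does not move the tiger, so the transition is the identity.
stay_put = {
    "tiger-left": {"tiger-left": 1.0, "tiger-right": 0.0},
    "tiger-right": {"tiger-left": 0.0, "tiger-right": 1.0},
}
# Assumed sensor model: a growl is heard on the correct side 85% of the time.
heard_left = {"tiger-left": 0.85, "tiger-right": 0.15}

belief = {"tiger-left": 0.5, "tiger-right": 0.5}
belief = update_belief(belief, stay_put, heard_left)
print(belief)  # one noisy observation shifts the belief toward tiger-left
belief = update_belief(belief, stay_put, heard_left)
print(belief)  # a second matching observation sharpens it further
```

The agent never learns where the tiger is; it only becomes more or less sure, which is the whole point of a belief state.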

MARL (Multi-Agent Reinforcement Learning)

If Reinforcement Learning (RL) is teaching a single dog to fetch, MARL is orchestrating a pack of dogs—each learning its own strategy while reacting to the others. It’s the study of how multiple autonomous agents learn policies simultaneously in shared environments. The twist? Your reward depends on what everyone else does, creating a dynamic that ranges from collaborative symphonies to cutthroat competition. No single agent controls the world; they must adapt, negotiate, or outwit each other in real time.
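The "your reward depends on what everyone else does" twist can be shown without any learning at all. The sketch below uses a hypothetical stag-hunt payoff table and computes one agent's best response to two different policies of the other agent; it illustrates the game structure that MARL algorithms must cope with and is not itself a learning algorithm.

```python
from typing import Dict, Tuple

# PAYOFFS[(my_action, other_action)] = my reward (hypothetical stag-hunt values).
PAYOFFS: Dict[Tuple[str, str], float] = {
    ("stag", "stag"): 4.0,  # cooperating pays best, but only if both commit
    ("stag", "hare"): 0.0,
    ("hare", "stag"): 3.0,  # hunting hare is safe regardless of the other agent
    ("hare", "hare"): 3.0,
}
ACTIONS = ("stag", "hare")


def expected_reward(my_action: str, other_policy: Dict[str, float]) -> float:
    """Average my payoff over the other agent's action probabilities."""
    return sum(
        prob * PAYOFFS[(my_action, other_action)]
        for other_action, prob in other_policy.items()
    )


def best_response(other_policy: Dict[str, float]) -> str:
    """The action that maximizes my expected reward against a fixed other policy."""
    return max(ACTIONS, key=lambda action: expected_reward(action, other_policy))


reliable_partner = {"stag": 0.9, "hare": 0.1}
flaky_partner = {"stag": 0.5, "hare": 0.5}

print(best_response(reliable_partner))  # stag
print(best_response(flaky_partner))     # hare
```

Nothing about the environment changed between the two calls, only the other agent's behavior, yet the best action flipped. When both agents are learning at once, each is chasing a target that moves as the other adapts.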

KL-Divergence (Kullback-Leibler Divergence)

Often called the “distance” between two probability distributions, KL-divergence is more precisely the information loss when you approximate one distribution (P) with another (Q). If P is the true distribution of rainfall in a region and Q is your weather model’s prediction, KL-divergence quantifies how many bits of surprise you’ll suffer by using Q instead of P. Crucially, it’s asymmetric: approximating a complex bimodal distribution with a simple Gaussian incurs a different penalty than the reverse gist.github.com/gsoykan.

In deep learning, this becomes a loss function—torch.nn.KLDivLoss in PyTorch or custom implementations in Keras—driving everything from Variational Autoencoders (VAEs) to policy regularization in RL github.com/christianversloot.
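To see the asymmetry with actual numbers, here is a small dependency-free sketch for discrete distributions. The rain probabilities are invented for the example, and the function is a plain-Python illustration rather than a substitute for the framework loss functions mentioned above.

```python
import math
from typing import Sequence


def kl_divergence(p: Sequence[float], q: Sequence[float], base: float = 2.0) -> float:
    """D_KL(P || Q) for discrete distributions, in bits when base is 2."""
    if len(p) != len(q):
        raise ValueError("P and Q must have the same number of outcomes.")
    total = 0.0
    for pi, qi in zip(p, q):
        if pi == 0:
            continue  # outcomes P never produces contribute nothing
        if qi == 0:
            return math.inf  # Q rules out something P actually does
        total += pi * math.log(pi / qi, base)
    return total


# Hypothetical example: P is the true chance of (rain, no rain), Q is a model's forecast.
true_weather = [0.5, 0.5]
forecast = [0.9, 0.1]

print(kl_divergence(true_weather, forecast))      # about 0.737 bits
print(kl_divergence(forecast, true_weather))      # about 0.531 bits
print(kl_divergence(true_weather, true_weather))  # 0.0
```

Swapping the arguments changes the answer, which is why KL-divergence is not a true distance despite the nickname.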

Visual References & Code Repositories

Theory is best served with visualization. For those who learn by tinkering, the following GitHub repositories transform abstract formulas into interactive playgrounds:

Understanding KL Divergence Through Gaussians

The repository sidml/understanding-kl-divergence offers perhaps the most intuitive entry point for visual learners. It features an animated notebook (kldiv_viz.gif) that demonstrates how a single Gaussian distribution Q “morphs” to approximate a complex bimodal target distribution P by minimizing KL-divergence. Watching the mean slide and variance stretch in real time provides a visceral understanding of why KL-divergence penalizes “missing mass” (where P has probability but Q doesn’t) more heavily than “extra mass” github.com/sidml.

Practical Implementations

For practitioners ready to implement:

PyTorch Users: The gist gsoykan/kl_div_loss.md breaks down the nuances of KLDivLoss, including the critical distinction between log-space inputs and target distributions.

Keras/TensorFlow Users: The guide at christianversloot/machine-learning-articles demonstrates how to integrate KL-divergence into neural network architectures, particularly for regularization and variational inference tasks.

POMDP Foundations

While less code-centric, the CSIRO primer A Primer on Partially Observable Markov Decision Processes provides the essential mathematical scaffolding and typology of solvers for those ready to model decision-making under uncertainty.

Happy exploring—may your divergences be small and your observations be informative.
