AI agents forget everything between sessions.

MemVerse gives them a brain that thinks fast AND slow.

TL;DR

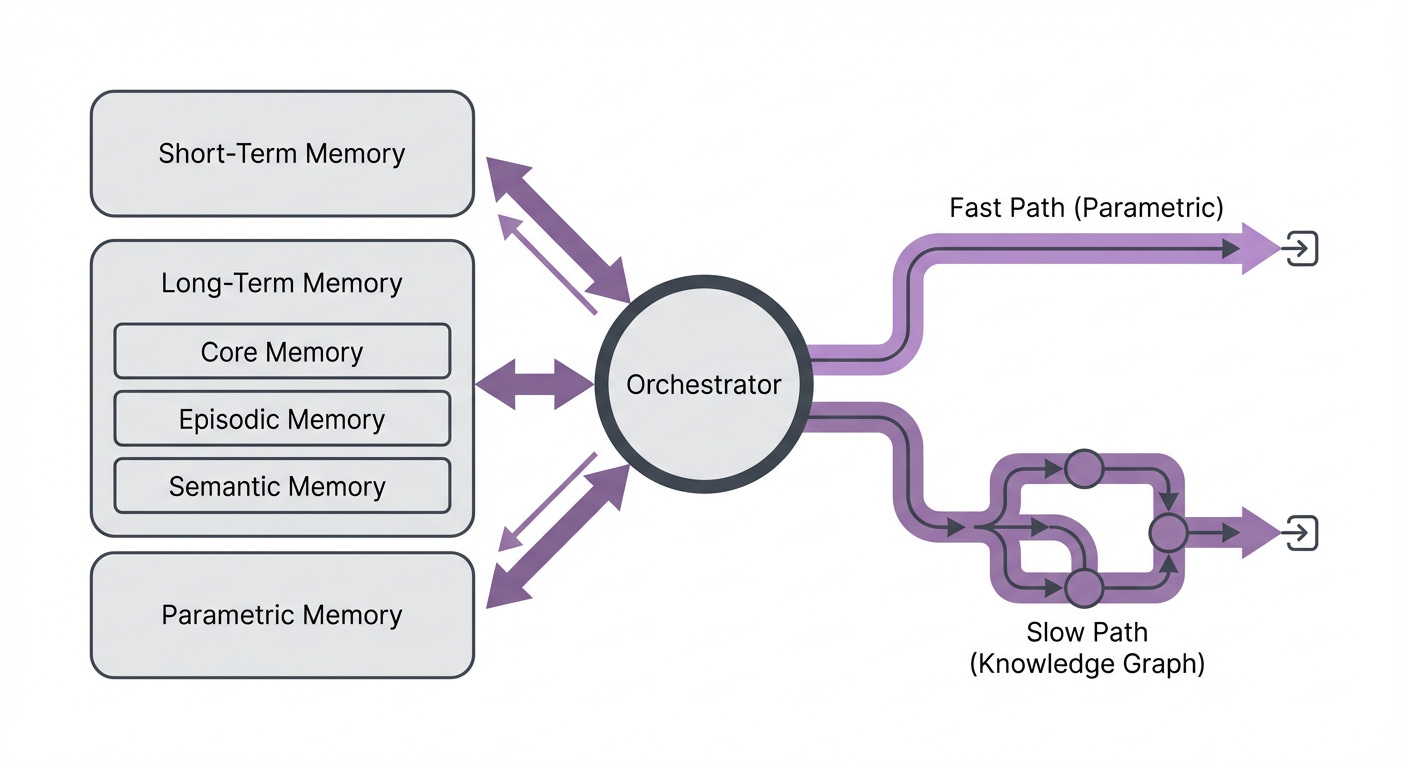

MemVerse, an open-source framework from Shanghai AI Lab, gives AI agents lifelong memory through a dual-memory architecture: parametric memory for fast, intuitive recall (inspired by Kahneman’s System 1) and knowledge graphs for slow, deliberative reasoning (System 2). Three persistent layers (short-term, long-term, parametric) are orchestrated through a central controller. The result: 89% faster retrieval than RAG, a 90% reduction in token usage through intelligent distillation, and agents that actually learn and adapt across conversations.

If you’ve built AI agents, you’ve hit the wall: they’re amnesiacs. Each session resets. Each conversation starts from zero. They can’t grow, adapt, or learn from experience. You can throw long context windows at the problem, but that’s a band-aid. What if agents had actual memory?

MemVerse, released by Shanghai AI Lab, takes a serious swing at this. It’s not a toy. It’s an open-source framework with a real architecture: three distinct memory layers, a central orchestrator, and a clever dual-pathway system for retrieval. When you combine that with knowledge graphs, supervised distillation, and intelligent compression, you get something that actually works.

But before you declare this the future of agentic AI, we need to talk about what “multimodal memory” really means here. Spoiler: images don’t stay images.

The architecture that mimics human memory

MemVerse borrows from cognitive science. Your brain has fast and slow thinking (Kahneman’s framework). MemVerse does too, except it calls them parametric memory and knowledge graph retrieval.

Start with the three layers:

Short-term memory works like a sliding window. It keeps the N most recent interactions available for immediate context. Cheap to maintain. Useful for sequential tasks where recent history matters. But it doesn’t scale and doesn’t generalize.
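A sliding window like this maps naturally onto a bounded queue. The sketch below is a minimal illustration, not MemVerse’s code; the class name `ShortTermMemory` and its methods are hypothetical.

```python
from collections import deque


class ShortTermMemory:
    """Hypothetical sliding window over the N most recent interactions."""

    def __init__(self, n: int) -> None:
        # A deque with maxlen silently drops the oldest item once it is full
        self._window: deque = deque(maxlen=n)

    def add(self, interaction: str) -> None:
        self._window.append(interaction)

    def context(self) -> list:
        # Oldest first, newest last, ready to be prepended to a prompt
        return list(self._window)


stm = ShortTermMemory(n=3)
for turn in ["hi", "what is quantization?", "int8 or int4?", "thanks"]:
    stm.add(turn)
print(stm.context())  # ['what is quantization?', 'int8 or int4?', 'thanks']
```

The eviction is exactly why this layer is cheap: its size is fixed at N, but anything older than N turns is simply gone.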

Long-term memory is where the real structure lives. This layer uses knowledge graphs across three dimensions:

Core memory stores durable user facts and preferences. Things that shouldn’t change.

Episodic memory captures time-ordered events: “On March 15, the user asked about model quantization, and we discussed it for 30 minutes.”

Semantic memory holds generalizable knowledge: entity relationships, abstract concepts, patterns the agent can reuse across conversations.

All three are persistent. All three maintain references back to the original content chunks. This matters because it means you can audit and debug what the agent remembers.
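To make the provenance point concrete, here is a minimal sketch of long-term entries that carry pointers back to their source chunks. It is a deliberate simplification: it flattens the knowledge graph into a tagged list (graph edges are omitted), and the names `MemoryEntry`, `LongTermMemory`, and the chunk IDs are hypothetical.

```python
from dataclasses import dataclass, field
from typing import List, Literal, Optional

Kind = Literal["core", "episodic", "semantic"]


@dataclass
class MemoryEntry:
    kind: Kind
    content: str
    source_chunks: List[str]      # IDs of the original content chunks (provenance)
    when: Optional[str] = None    # timestamp; only meaningful for episodic entries


@dataclass
class LongTermMemory:
    entries: List[MemoryEntry] = field(default_factory=list)

    def add(self, entry: MemoryEntry) -> None:
        self.entries.append(entry)

    def by_kind(self, kind: Kind) -> List[MemoryEntry]:
        return [e for e in self.entries if e.kind == kind]

    def provenance(self, term: str) -> List[str]:
        """Source chunk IDs behind every entry that mentions `term`, deduplicated in order."""
        ids = [cid for e in self.entries if term.lower() in e.content.lower()
               for cid in e.source_chunks]
        return list(dict.fromkeys(ids))


ltm = LongTermMemory()
ltm.add(MemoryEntry("core", "User prefers PyTorch", ["chunk-001"]))
ltm.add(MemoryEntry("episodic", "User asked about model quantization", ["chunk-042"], when="March 15"))
ltm.add(MemoryEntry("semantic", "Quantization trades accuracy for memory", ["chunk-042", "chunk-108"]))
print(ltm.provenance("quantization"))  # ['chunk-042', 'chunk-108']
```

Answering “why does the agent believe this?” then reduces to following those IDs back to the raw text.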

Parametric memory is the clever part. It’s a lightweight language model that gets periodically fine-tuned on the high-value stuff from long-term memory. Think of it as distillation: compress the essential knowledge into model weights. This gives you instant, differentiable recall without touching a database.
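The selection half of that distillation loop can be sketched as turning high-value long-term facts into supervised fine-tuning pairs. How MemVerse actually scores “high-value” is not described here, so the retrieval-count signal `hits`, the `min_hits` threshold, and the prompt/completion format are all assumptions; the fine-tuning run itself is out of scope.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Fact:
    question: str
    answer: str
    hits: int  # hypothetical value signal: how often this fact was retrieved


def build_distillation_set(facts: List[Fact], min_hits: int) -> List[Dict[str, str]]:
    """Keep facts retrieved at least `min_hits` times, most-used first, as SFT pairs."""
    selected = sorted((f for f in facts if f.hits >= min_hits),
                      key=lambda f: f.hits, reverse=True)
    return [{"prompt": f.question, "completion": f.answer} for f in selected]


facts = [
    Fact("Which framework does the user prefer?", "PyTorch", hits=12),
    Fact("What did the user ask on March 15?", "Model quantization", hits=3),
    Fact("What is the user's time zone?", "Unknown", hits=0),
]
print(build_distillation_set(facts, min_hits=3))
```

Only the first two facts survive; low-traffic knowledge stays in the graph, where the slow path can still reach it.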

Then comes the orchestrator. It acts like your prefrontal cortex: managing add, update, delete, and retrieval operations across all three layers. Everything flows through it.
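A toy version of that single entry point shows why routing every operation through one controller keeps the layers consistent: an update or delete in long-term memory is mirrored in the short-term window. This is a hypothetical sketch, not MemVerse’s API; `Orchestrator`, its method names, and the substring-match retrieval are illustrative assumptions.

```python
from collections import deque
from typing import Dict, List, Optional


class Orchestrator:
    """Hypothetical controller: every add/update/delete/retrieve goes through here."""

    def __init__(self, window: int = 5) -> None:
        self.short_term: deque = deque(maxlen=window)  # recent interactions
        self.long_term: Dict[str, str] = {}            # memory id -> content

    def _sync_window(self, old: str, new: Optional[str]) -> None:
        # Replace (or drop, when new is None) stale copies in the short-term window
        items = [new if c == old else c for c in self.short_term]
        self.short_term = deque((c for c in items if c is not None),
                                maxlen=self.short_term.maxlen)

    def add(self, mem_id: str, content: str) -> None:
        self.short_term.append(content)
        self.long_term[mem_id] = content

    def update(self, mem_id: str, content: str) -> None:
        old = self.long_term[mem_id]  # raises KeyError for unknown ids
        self.long_term[mem_id] = content
        self._sync_window(old, content)

    def delete(self, mem_id: str) -> None:
        old = self.long_term.pop(mem_id)
        self._sync_window(old, None)

    def retrieve(self, query: str) -> List[str]:
        q = query.lower()
        recent = [c for c in self.short_term if q in c.lower()]
        durable = [c for c in self.long_term.values() if q in c.lower() and c not in recent]
        return recent + durable


orc = Orchestrator(window=2)
orc.add("m1", "User prefers PyTorch")
orc.add("m2", "User is learning quantization")
orc.add("m3", "User targets edge devices")
orc.update("m1", "User prefers JAX")
orc.delete("m2")
print(orc.retrieve("user"))  # ['User targets edge devices', 'User prefers JAX']
```

Because deletion goes through one method, the deleted fact cannot linger in one layer after it has left another.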

The fast and slow paths of reasoning

Here’s where the Kahneman influence shows up clearly.

The fast path uses parametric memory. An agent needs to recall something? The parametric model does forward inference. It’s intuitive, it’s fast, it’s differentiable. No graph traversal. No database queries. Just weights and matrix multiplication. This is your System 1 thinking: automatic, pattern-matching, confident. An agent can retrieve relevant knowledge in a single forward pass and integrate it immediately into the response. For common queries, frequent patterns, or time-sensitive decisions, this speed is everything.

The slow path uses the knowledge graphs. When the agent needs to deliberate, to reason through complex connections, it traverses the graph structure. This is slower but more reliable. It can follow chains of reasoning. It can explain its retrieval because the path is explicit. An agent following the slow path can distinguish between core facts, episodic events, and semantic relationships. It can say: “This came from a specific conversation on March 15” or “This pattern emerged across five different contexts.” It’s your System 2 thinking: deliberate, traceable, justified.
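The traceability of the slow path is easiest to see in code: a traversal that returns not just the answer but the chain of edges that led to it. Breadth-first search is used here as a stand-in; the article does not specify MemVerse’s traversal strategy, and the graph, relation names, and `explain_path` are hypothetical.

```python
from collections import deque
from typing import Dict, List, Optional, Tuple

# node -> list of (relation, neighbor)
Graph = Dict[str, List[Tuple[str, str]]]
Hop = Tuple[str, str, str]  # (source, relation, target)


def explain_path(graph: Graph, start: str, goal: str) -> Optional[List[Hop]]:
    """Breadth-first search returning the shortest chain of labeled hops, or None."""
    parents: Dict[str, Tuple[str, str]] = {}  # node -> (previous node, relation used)
    seen = {start}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        if node == goal:
            hops: List[Hop] = []
            while node != start:  # walk back to the start to rebuild the path
                prev, rel = parents[node]
                hops.append((prev, rel, node))
                node = prev
            return hops[::-1]
        for rel, nxt in graph.get(node, []):
            if nxt not in seen:
                seen.add(nxt)
                parents[nxt] = (node, rel)
                queue.append(nxt)
    return None


graph: Graph = {
    "user": [("asked_about", "quantization"), ("prefers", "PyTorch")],
    "quantization": [("is_a", "model compression"), ("discussed_in", "March 15 session")],
}
for src, rel, dst in explain_path(graph, "user", "model compression"):
    print(f"{src} --{rel}--> {dst}")
```

Each printed hop is an auditable edge, which is what lets the slow path say where a memory came from.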

The framework lets you switch paths or combine them depending on the task. For a customer service agent handling routine inquiries, you lean fast. For a research assistant building a complex argument, you go slow. For a reasoning-heavy task, you might use fast retrieval to seed the initial context and then slow traversal to build connections. That flexibility is underrated in memory system design. Most frameworks force a choice. MemVerse lets you pick the tool that fits the moment.
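One way to express “pick the tool that fits the moment” is a small router over two retrieval functions. This is a sketch under heavy assumptions: a plain dict lookup stands in for the parametric model’s forward pass, a keyword function stands in for graph traversal, and the fallback from fast to slow on a miss is a design choice of this example, not something the article attributes to MemVerse.

```python
from typing import Callable, List, Literal, Optional

Mode = Literal["fast", "slow", "hybrid"]
FastPath = Callable[[str], Optional[str]]  # stand-in for parametric recall
SlowPath = Callable[[str], List[str]]      # stand-in for knowledge-graph traversal


def retrieve(query: str, mode: Mode, fast: FastPath, slow: SlowPath) -> List[str]:
    """Route a query to the fast path, the slow path, or both."""
    if mode == "fast":
        hit = fast(query)
        # Assumption: fall back to the slow path when fast recall has nothing
        return [hit] if hit is not None else slow(query)
    if mode == "slow":
        return slow(query)
    # hybrid: fast recall seeds the context, slow traversal adds connections
    seed = fast(query)
    seeded = [seed] if seed is not None else []
    return seeded + [r for r in slow(query) if r not in seeded]


# Hypothetical stand-ins for the two memory paths
parametric = {"preferred framework": "PyTorch"}.get


def graph_search(query: str) -> List[str]:
    if "framework" in query:
        return ["PyTorch", "User asked about quantization on March 15"]
    return []


print(retrieve("preferred framework", "fast", parametric, graph_search))  # ['PyTorch']
print(retrieve("preferred framework", "hybrid", parametric, graph_search))
```

In hybrid mode the fast hit is kept first and duplicates from the slow path are dropped, so the seed context is extended rather than repeated.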

The multimodal angle (and a note on the bottleneck)

MemVerse’s paper frames this as a “multimodal memory framework,” and it does accept images, audio, and video. However, like most systems in this space, it converts all multimodal inputs into text captions before storing them in the knowledge graphs and other memory layers. Images become GPT-4o-mini captions. Audio becomes Whisper transcriptions. Everything flows through a text-centric architecture.

This tradeoff works well for knowledge retention and retrieval, but it does flatten some information. We’ll dive deeper into why this text-to-knowledge bottleneck matters across the field in Wednesday’s article.
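The text-centric funnel described above can be sketched as a normalization step that runs before anything is stored. The converter callables are placeholders: per the article, MemVerse uses GPT-4o-mini for image captions and Whisper for audio transcription, but the stubs below call neither, and `to_text` and `TextMemory` are hypothetical names.

```python
from dataclasses import dataclass
from typing import Callable, Dict


@dataclass
class TextMemory:
    modality: str  # where the content came from: "text", "image", "audio", ...
    text: str      # the only representation that reaches the memory layers


def to_text(modality: str, payload: str, converters: Dict[str, Callable[[str], str]]) -> TextMemory:
    """Flatten any input into text before storage; raises KeyError for unknown modalities."""
    if modality == "text":
        return TextMemory("text", payload)
    return TextMemory(modality, converters[modality](payload))


# Placeholder converters; a real system would call a captioning or speech-to-text model here
converters = {
    "image": lambda path: f"[caption of {path}]",
    "audio": lambda path: f"[transcript of {path}]",
}
print(to_text("image", "chart.png", converters))
```

Whatever the caption leaves out (exact values in a chart, spatial layout, tone of voice) never enters memory, which is the flattening described above.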

Performance: where it actually shines

The numbers matter less than the mechanics, but they’re still worth seeing.

On ScienceQA (requires visual reasoning), GPT-4o-mini improved from 76.82% to 85.48% accuracy with MemVerse. On MSR-VTT (text-to-video retrieval), MemVerse hit 90.4% recall@1, crushing CLIP (29.7%) and ExCae (67.7%). That’s not marginal.

The speed gains are real too. Memory retrieval runs 89% faster than standard RAG. Even the long-term memory alone is 72% faster than naive approaches. The distillation process cuts token usage by 90%, which matters if you’re running this at scale.

But the paper also surfaces the limits honestly. Short-term memory barely helps on non-sequential tasks. Smaller models (like Qwen) struggle to use retrieved context effectively. The architecture works best when prompts are carefully designed to help the agent integrate memories. And frontier models benefit more than weaker ones, which means scaling this down to cheaper models isn’t trivial.

What this means for agentic systems

The real contribution isn’t any single idea. It’s the orchestration. Bringing together parametric memory, knowledge graphs, dual retrieval paths, and periodic distillation into one coherent system is non-trivial engineering. Most memory proposals solve one problem in isolation. MemVerse solves several simultaneously: how to store, organize, retrieve, and compress knowledge in ways that match how agents actually reason.

If you’re building agents that need to learn from conversations, this is worth understanding deeply. You get persistence without infinite context windows (the parametric path handles compression). You get fast recall for common queries and deliberative recall for complex reasoning (dual paths). You get compression that actually works because you’re fine-tuning the parametric model on what genuinely matters. You get traceability because knowledge graph edges can be audited. And you get a system that scales because simple queries take the fast path, while the expensive slow path is used selectively.

The architecture also solves a coordination problem. In most systems, memory management is either monolithic (everything goes into the same RAG index) or ad hoc (engineers wire up multiple systems by hand). MemVerse’s central orchestrator means consistent update semantics, unified deletion operations, and predictable retrieval. When your long-term memory gets stale, you can trigger re-distillation into the parametric layer. When short-term memory fills up, it automatically evicts the oldest entries. The system degrades gracefully rather than breaking.
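The re-distillation trigger can be sketched as a queue of changed memory IDs with a staleness threshold. The article describes re-distillation as something you can trigger when long-term memory goes stale; the automatic `threshold` below and the `DistillationScheduler` name are assumptions for illustration, and the fine-tuning job it would hand off to is not shown.

```python
from dataclasses import dataclass, field
from typing import List, Set


@dataclass
class DistillationScheduler:
    """Hypothetical tracker that signals when the parametric layer has gone stale."""
    threshold: int                                   # assumed: distinct changes tolerated
    pending: Set[str] = field(default_factory=set)   # memory ids changed since last run

    def record_change(self, mem_id: str) -> bool:
        """Note an add/update/delete; return True when re-distillation should run."""
        self.pending.add(mem_id)
        return len(self.pending) >= self.threshold

    def redistill(self) -> List[str]:
        """Hand the changed ids to a (not shown) fine-tuning job and reset the queue."""
        batch = sorted(self.pending)
        self.pending.clear()
        return batch


sched = DistillationScheduler(threshold=2)
sched.record_change("m1")
sched.record_change("m1")          # same fact edited twice: still one pending change
if sched.record_change("m2"):
    print(sched.redistill())       # ['m1', 'm2']
```

Counting distinct IDs rather than raw events means repeated edits to the same fact do not trigger a costly fine-tuning run on their own.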

The open-source implementation supports Docker, runs as an MCP server, and exposes OpenAI-compatible APIs. That’s not accidental. The authors engineered this to be usable, not just publishable. If you’re already using Claude or GPT as your agent backbone, you can drop MemVerse in as a memory layer.

The honest take: this isn’t a silver bullet for agent memory. It’s a coherent framework that solves real engineering problems without overselling what it does. The dual-memory architecture is the genuine innovation. The multimodal angle is useful but secondary (everything becomes text, which is fine). If you’ve been frustrated by stateless agents that can’t learn or adapt, MemVerse is worth a serious look.

References and Further Reading

MemVerse: Neural Persistent Memory with Multimodal Knowledge Graphs - Shanghai AI Lab

MemVerse GitHub Repository - Open-source implementation with Docker and MCP support

Thinking, Fast and Slow - Daniel Kahneman (foundational for dual-system cognition)

Knowledge Graphs in AI: A Review - Foundational concepts for structured memory representation

Retrieval-Augmented Generation for Knowledge-Intensive NLP Tasks - Lewis et al. (context for RAG comparison)

Vision-Language Models and Multimodal Understanding - OpenAI CLIP and successor models

Model Distillation for Compression and Efficiency - Foundational work on knowledge distillation principles
